Watch On-Demand

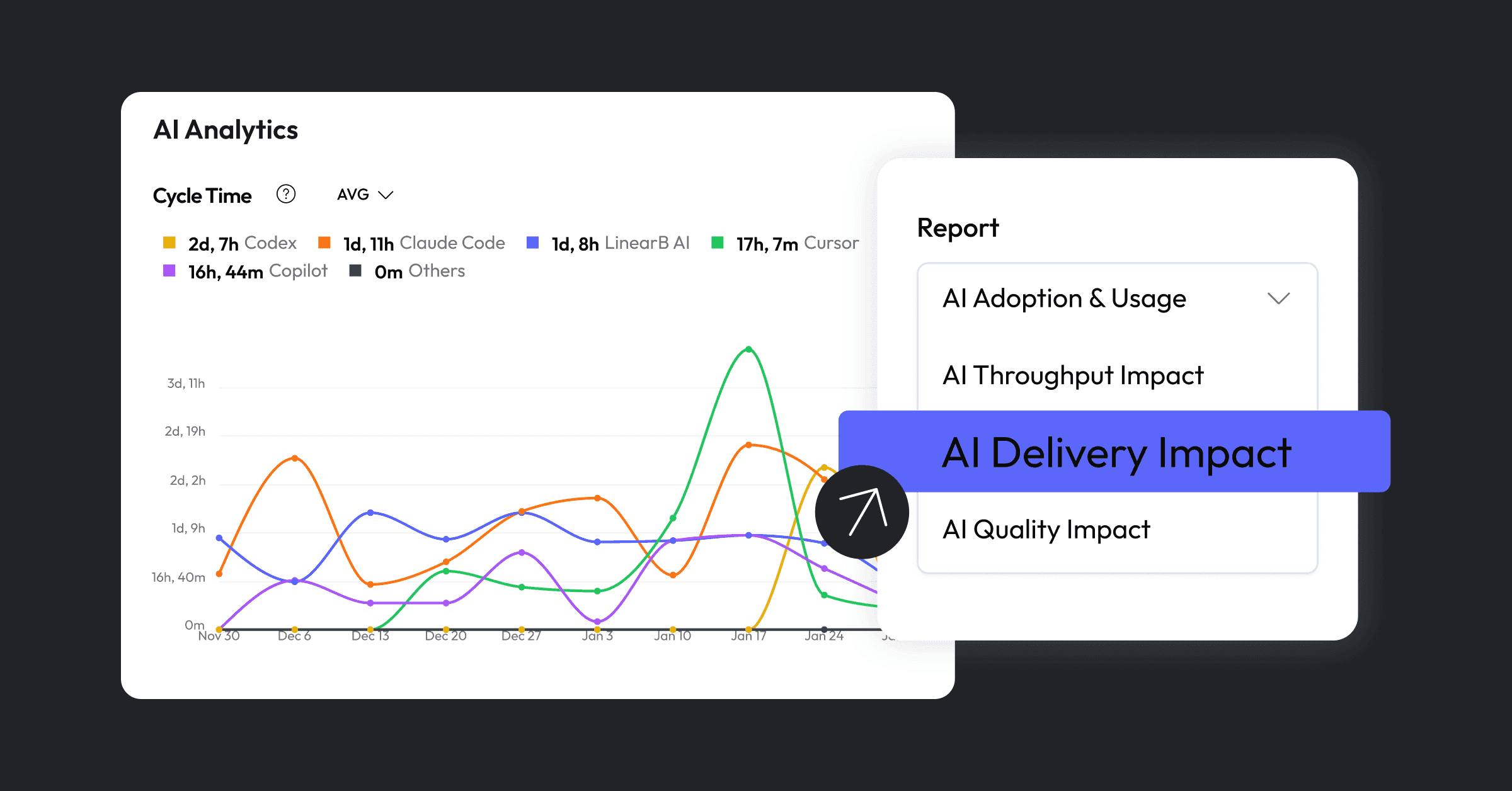

GitHub Copilot here, Cursor there, Claude Code somewhere else. Your teams have adopted AI across tools, but is it actually working?

Speakers

Ben Lloyd Pearson

Director, Developer Experience

LinearB

Ofer Affias

Senior Director of Product

LinearB

About the workshop

The real challenge isn’t adoption; it’s understanding what happens after.

In this session, you’ll learn how to connect AI activity to commits, PRs, and delivery outcomes, and walk away with an operating model to prove and scale impact.

Answer whether your AI investments are working:

- How much of your code is AI-assisted, and where is it contributing to code, reviews, and PRs?

- Which tools are having the most impact across your delivery pipeline?

- Which users, teams, and workflows are getting the most impact from AI?

- Is AI improving throughput, or simply shifting work from coding to review and rework?

Your next read

Workshop

Build vs. buy: Why DIY engineering metrics break at scale

Watch a 35-minute workshop in which we show where agentic AI breaks down when you try to build an engineering productivity platform that drives improvement.

Guide

How to build your own engineering productivity platform

Do we still need to buy the vendor platforms we’re evaluating, or could a small team and a Claude subscription build the parts we want?

Report

LinearB is a Leader in the 2026 Gartner® Magic Quadrant™ for Developer Productivity Insight Platforms

Get complimentary access to the full report.