The APEX framework

Summary

- Measure AI at the PR level - Track AI-assisted pull requests to connect AI activity to real delivery outcomes, not just tool adoption.

- Four pillars, four north stars - AI Leverage, Predictability, Flow Efficiency, and Developer Experience each have one guiding metric.

- Speed without predictability creates chaos - AI increases output variability, so delivery commitments must be tracked and protected.

- AI shifts bottlenecks downstream - Faster coding often clogs review queues; decompose cycle time to find where velocity gets stuck.

- DevEx is non-negotiable - If developer satisfaction drops as throughput rises, the gains are unsustainable and the model is failing.

Framework overview

The gap between AI adoption and measurable engineering impact requires a new operating model. APEX is a system-level framework that validates whether AI increases throughput while preserving delivery confidence and developer experience.

APEX treats AI as a first-class production contributor, measured in the critical path. It connects engineering metrics to business outcomes, moving the conversation from AI adoption to demonstrating where AI creates sustainable value. It provides structure to minimize the engineering risk, burnout, and inefficiencies that undermine AI investments.

Governing principles

Measure AI in the critical path

If AI isn’t visible at the pull request level, you can’t validate its impact on throughput. Treat AI as a production contributor, not an experiment.

North stars over metric volume

Four key outcomes, not dozens of signals. Each pillar has a single north star metric that anchors decision-making. Secondary metrics exist for deeper diagnostics.

Predictability before velocity

Higher speed with low delivery confidence creates chaos. APEX elevates predictability as a primary outcome to ensure AI-driven work doesn’t disrupt commitments.

Flow exposes constraints

Metrics should reveal where work stops, not just how fast it moves. When AI accelerates coding, bottlenecks shift downstream. Metrics on flow efficiency make those shifts visible.

DevEx is a guardrail

Developer experience is non-negotiable. If satisfaction drops, throughput gains are illusory. Sustainable productivity requires happy and effective teams.

Download your free copy of the guide

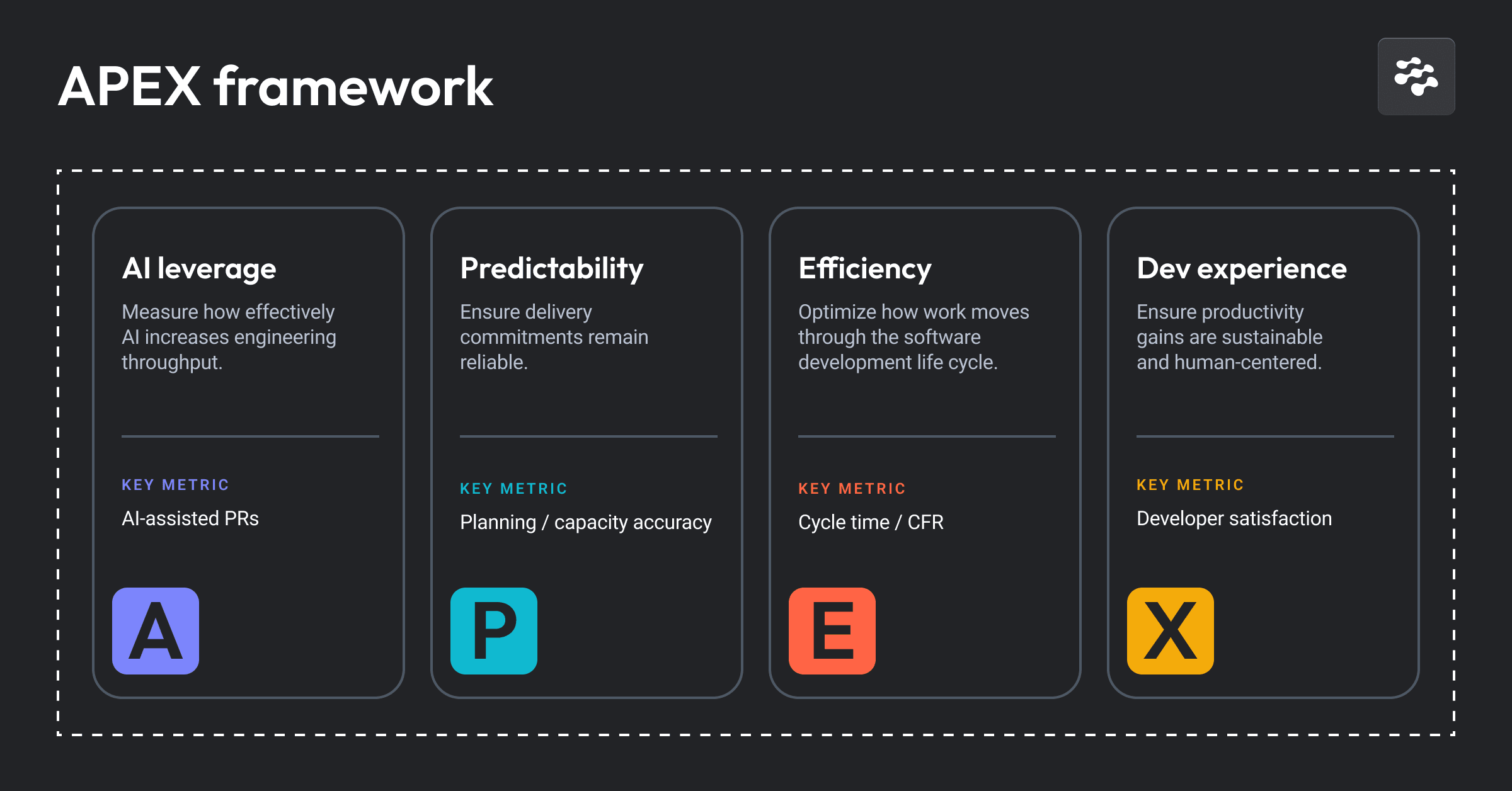

APEX pillars

North stars and diagnostics

APEX organizes measurement around four pillars, each with a north star metric and supporting diagnostics. Together, they answer whether AI is improving throughput sustainably.

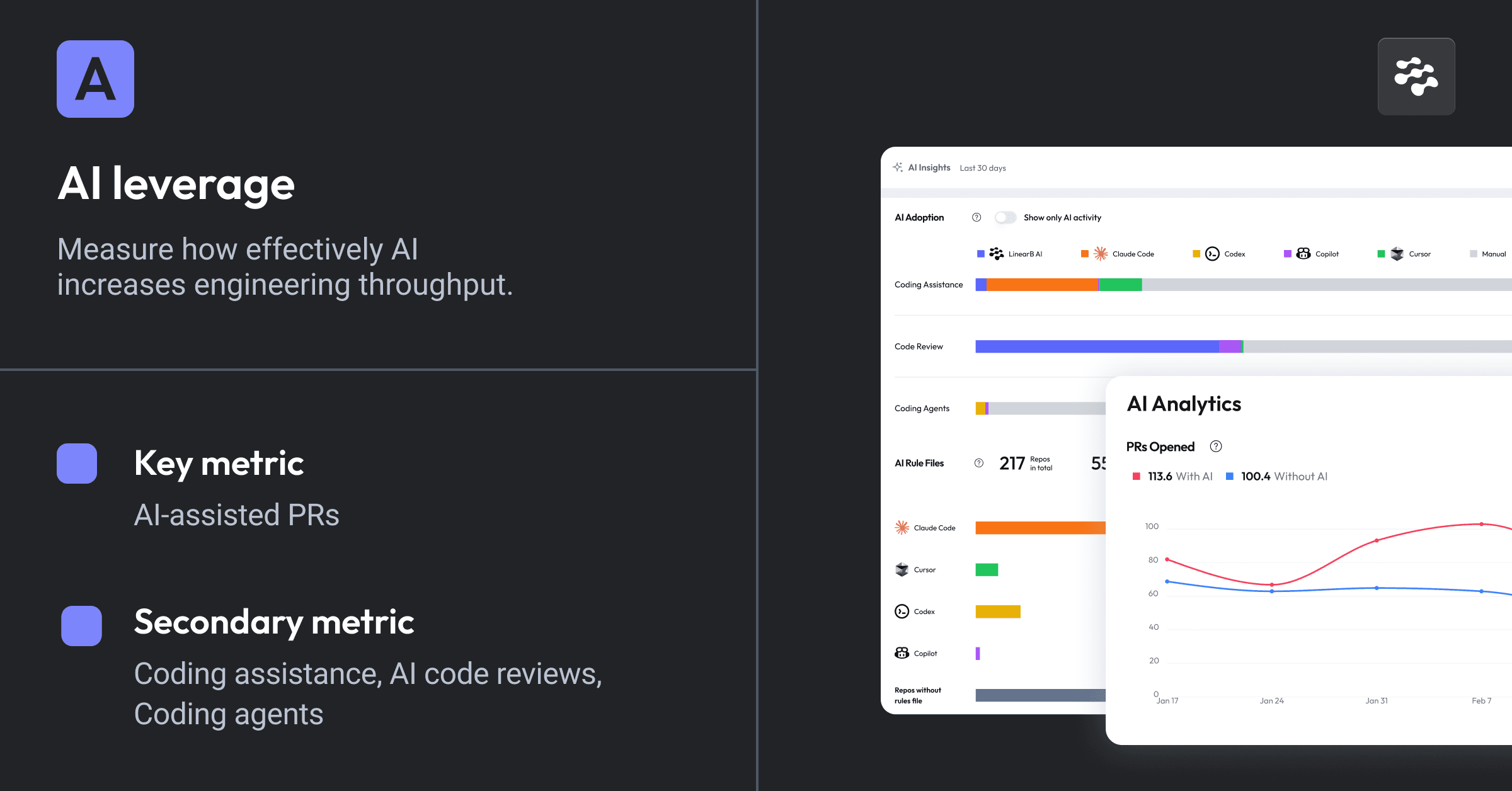

Pillar A: AI leverage

• Objective: Measure how effectively AI and automation are embedded in production workflows to increase throughput.

• North star metric: AI-assisted pull requests

• Secondary metric: Human-AI contribution ratio (phase-level visibility)

A pull request qualifies as AI-assisted when AI contributes at any stage, whether through code generation (co-authored commits), automated review comments, or agentic PR authorship.

Tracking at the PR level captures real production impact rather than tool usage alone, because it connects AI activity to the unit of work that moves through the delivery system.
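The qualification rule above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the `PullRequest` record and its field names are hypothetical, and reporting the north star as a share of all PRs is an assumption, since the guide names the metric but does not prescribe a formula.

```python
from dataclasses import dataclass


@dataclass
class PullRequest:
    # Hypothetical record; the field names are illustrative assumptions.
    number: int
    has_ai_coauthored_commit: bool = False  # code generation
    has_ai_review_comment: bool = False     # automated review
    authored_by_agent: bool = False         # agentic PR authorship


def is_ai_assisted(pr: PullRequest) -> bool:
    """A PR qualifies when AI contributed at any stage."""
    return (
        pr.has_ai_coauthored_commit
        or pr.has_ai_review_comment
        or pr.authored_by_agent
    )


def ai_assisted_share(prs: list[PullRequest]) -> float:
    """Fraction of PRs that are AI-assisted (0.0 for an empty list)."""
    if not prs:
        return 0.0
    return sum(is_ai_assisted(pr) for pr in prs) / len(prs)


prs = [
    PullRequest(1, has_ai_coauthored_commit=True),
    PullRequest(2),
    PullRequest(3, has_ai_review_comment=True),
    PullRequest(4, authored_by_agent=True),
]
share = ai_assisted_share(prs)  # 3 of the 4 PRs qualify
```

Because the check runs per PR rather than per tool seat or per prompt, the result stays tied to the unit of work that moves through the delivery system.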

The human-AI contribution ratio breaks down where AI contributes across workflow phases, including coding, review, and deployment. The ratio reveals whether AI leverage is concentrated in a single phase or distributed across the delivery lifecycle. Concentration matters because different phases carry different stability profiles, and knowing where AI contributes helps diagnose quality issues when they arise.
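One way to picture the phase-level breakdown is to count contributions per phase and report the AI share of each. The sketch below assumes contributions arrive as `(phase, actor)` pairs and that a simple count is the unit of contribution; both are illustrative assumptions, not definitions from the guide.

```python
from collections import defaultdict


def contribution_ratio(events):
    """Return {phase: AI share of contributions} from (phase, actor) pairs.

    actor must be "ai" or "human". The event shape is an assumption
    made for illustration.
    """
    counts = defaultdict(lambda: {"ai": 0, "human": 0})
    for phase, actor in events:
        if actor not in ("ai", "human"):
            raise ValueError(f"unknown actor: {actor!r}")
        counts[phase][actor] += 1
    return {
        phase: c["ai"] / (c["ai"] + c["human"])
        for phase, c in counts.items()
    }


events = [
    ("coding", "ai"), ("coding", "ai"), ("coding", "human"), ("coding", "ai"),
    ("review", "human"), ("review", "human"), ("review", "ai"), ("review", "human"),
    ("deployment", "human"),
]
ratios = contribution_ratio(events)
# coding is 3 of 4 AI, review is 1 of 4 AI, deployment has no AI contributions
```

In this made-up sample, AI leverage is concentrated in coding, which is the kind of concentration the ratio is meant to surface.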

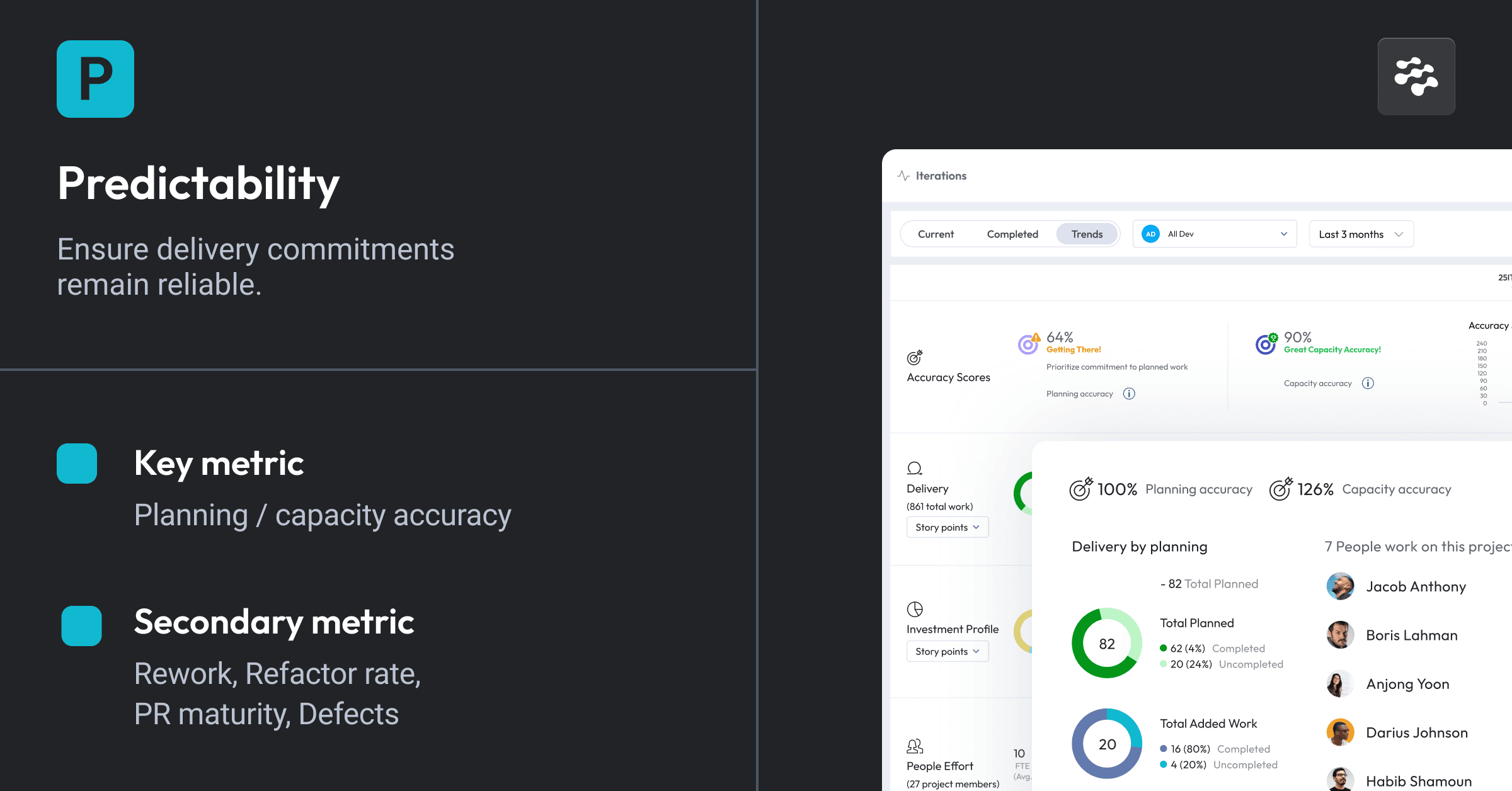

Pillar P: Predictability

• Objective: Ensure delivery commitments are reliable and changes do not introduce instability

• North star metric: Planning accuracy and capacity accuracy

• Diagnostics: Quality and stability indicators (change failure rate, rework, defects) as leading indicators

AI increases output variability. Predictability metrics ensure that speed doesn’t break trust with stakeholders who depend on reliable commitments.

Quality metrics like change failure rate, rework, and defects are treated as diagnostics that explain why predictability failed. Achieving high capacity accuracy proves that AI-induced velocity is real and plannable.
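The guide does not spell out formulas for the two north star metrics. A minimal sketch, assuming planning accuracy is the share of planned items that were completed and capacity accuracy is all completed work (planned plus unplanned) relative to planned work, might look like this. The function names and the use of issue IDs are illustrative.

```python
def planning_accuracy(planned: set[str], completed: set[str]) -> float:
    """Assumed definition: planned items that were completed / planned items."""
    if not planned:
        raise ValueError("planned must not be empty")
    return len(planned & completed) / len(planned)


def capacity_accuracy(planned: set[str], completed: set[str]) -> float:
    """Assumed definition: all completed items / planned items."""
    if not planned:
        raise ValueError("planned must not be empty")
    return len(completed) / len(planned)


# Hypothetical sprint: five items committed, six shipped, three of them planned.
planned = {"A", "B", "C", "D", "E"}
completed = {"A", "B", "C", "X", "Y", "Z"}

pa = planning_accuracy(planned, completed)  # 3 / 5
ca = capacity_accuracy(planned, completed)  # 6 / 5
```

Under these assumed definitions, the sample sprint delivered more volume than planned while meeting only three of five commitments, which is the pattern to watch for when AI raises output without raising reliability.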

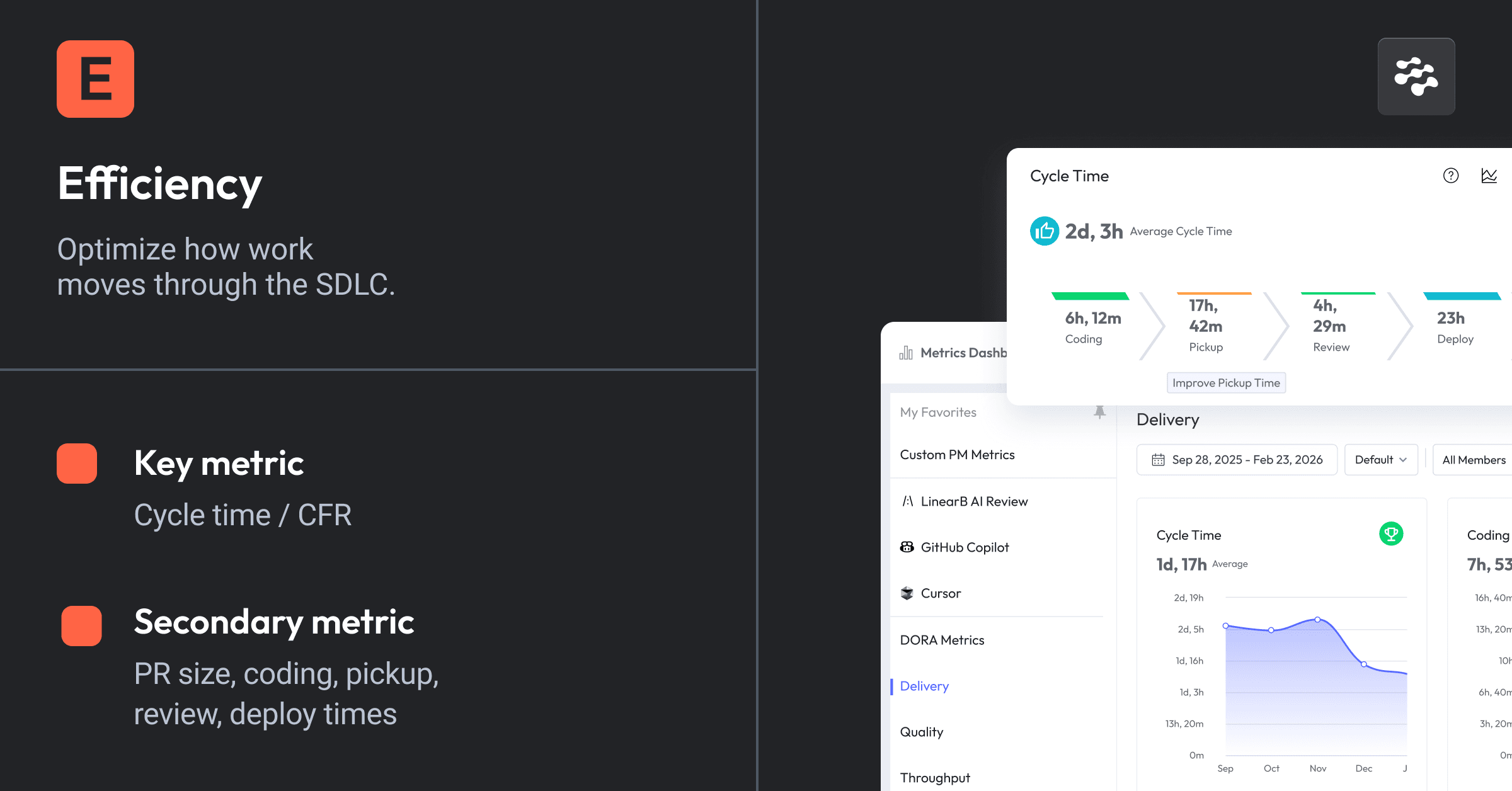

Pillar E: Flow efficiency

• Objective: Optimize how smoothly work moves from start to merge, reducing friction and hidden queues.

• North star metric: Cycle time, Change failure rate (CFR)

• Diagnostics: Pickup time, review time, PR size

Cycle time is the primary indicator for system throughput. It represents the end-to-end efficiency of the delivery system. CFR shows if your team is maintaining software quality while efficiency increases.

The downstream effect of AI is that accelerating coding often shifts bottlenecks to review or pickup times. If coding time drops due to AI but review time spikes, the system hasn’t improved. Instead, the constraint has moved. Decompose cycle time to find where AI velocity is getting stuck.
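Decomposition can be sketched with four timestamps per pull request. The phase boundaries used here (first commit, PR opened, first review, merge) are an assumption about how coding, pickup, and review time are delimited, and the sample values are invented for illustration.

```python
from datetime import datetime, timedelta


def decompose(first_commit, pr_opened, first_review, merged):
    """Split start-to-merge cycle time into three phases (assumed boundaries)."""
    return {
        "coding": pr_opened - first_commit,
        "pickup": first_review - pr_opened,
        "review": merged - first_review,
    }


t = datetime(2024, 1, 1, 9, 0)
h = lambda n: timedelta(hours=n)

# Hypothetical PR before AI rollout: 10h coding, 4h pickup, 6h review.
before = decompose(t, t + h(10), t + h(14), t + h(20))
# Hypothetical PR after rollout: 3h coding, 8h pickup, 9h review.
after = decompose(t, t + h(3), t + h(11), t + h(20))

# Per-phase change; a positive value means the phase got slower.
shift = {phase: after[phase] - before[phase] for phase in before}
bottleneck = max(shift, key=shift.get)
```

In this sample, total cycle time is 20 hours in both cases even though coding time fell by 7 hours. The time reappears in pickup and review, so the constraint moved rather than disappeared.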

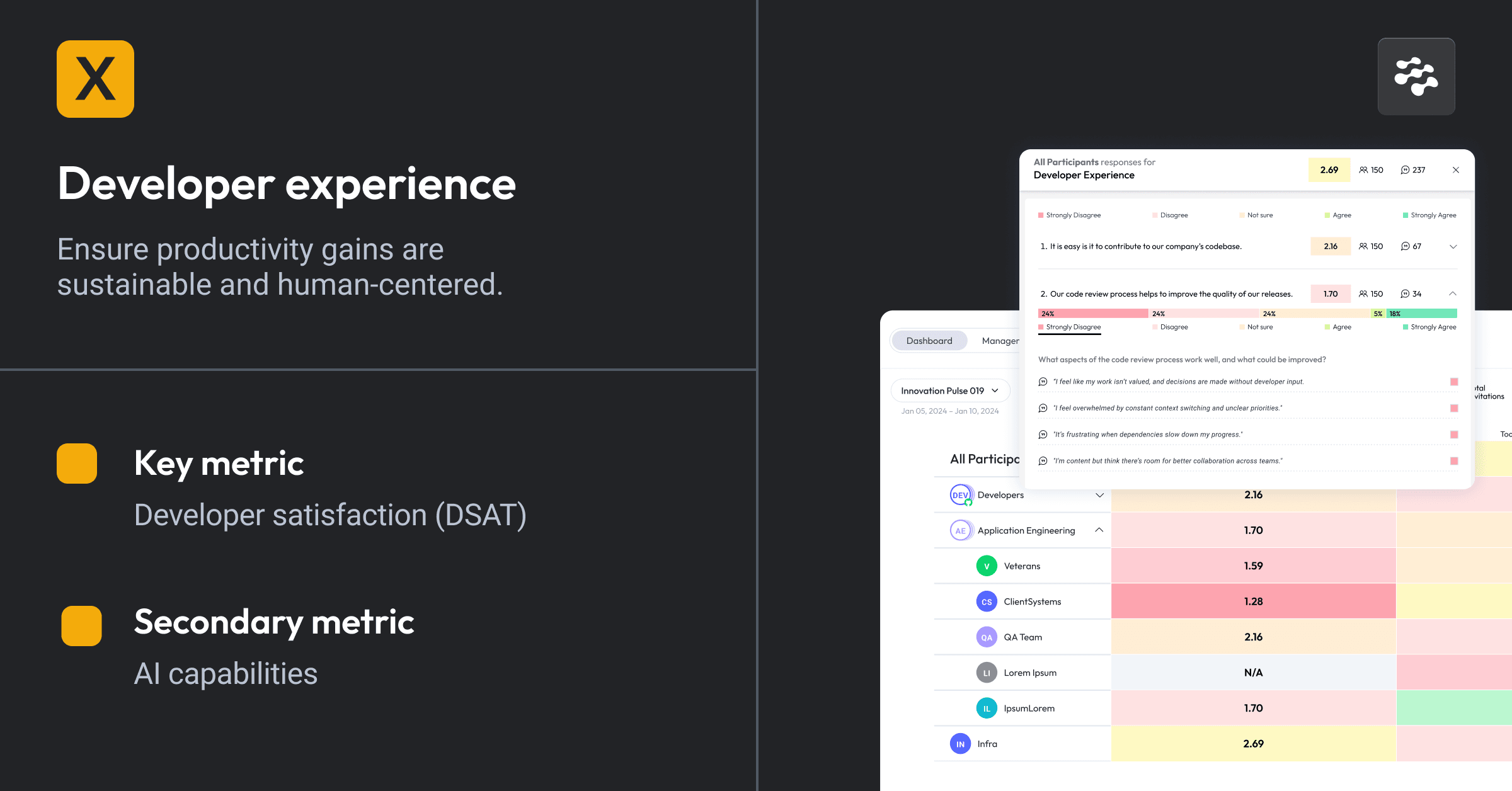

Pillar X: Developer experience (DevEx)

• Objective: Ensure productivity gains are sustainable and human-centered

• North star metric: Developer satisfaction (survey-based)

• Diagnostics: DORA’s seven AI readiness capabilities (survey dimensions)

Developer experience is a guardrail. If cycle time improves but satisfaction drops, the model is failing. Throughput gains achieved through burnout are not sustainable.
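The guardrail can be expressed as a simple check that pairs a flow metric with a survey score. This is a sketch under stated assumptions: the function name, the hour-based cycle time, and the survey scale are hypothetical, and a real review would likely add a tolerance so that noise does not trigger it.

```python
def guardrail_breached(
    cycle_before_h: float,
    cycle_after_h: float,
    satisfaction_before: float,
    satisfaction_after: float,
) -> bool:
    """True when cycle time improved but developer satisfaction dropped."""
    cycle_improved = cycle_after_h < cycle_before_h
    satisfaction_dropped = satisfaction_after < satisfaction_before
    return cycle_improved and satisfaction_dropped


# Faster delivery, lower satisfaction: the gains are suspect.
breached = guardrail_breached(40.0, 30.0, 4.1, 3.6)
# Faster delivery, stable or higher satisfaction: the guardrail holds.
healthy = guardrail_breached(40.0, 30.0, 4.1, 4.2)
```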

The human side of AI adoption

APEX incorporates DORA’s AI readiness capabilities as diagnostic survey questions. Combine quantitative flow data with qualitative survey data to get the full picture of whether AI adoption is sustainable.

Clear and communicated AI stance

The degree to which engineering teams understand approved AI tools, usage policies, and experimentation boundaries. Ambiguity in AI policy creates friction and slows adoption.

Healthy data ecosystems

The quality, accessibility, and integration of internal data systems. Teams that struggle to locate or trust their data face compounding inefficiencies that erode satisfaction.

AI-accessible internal data

The degree to which AI tools can connect to and leverage organizational context, including documentation, knowledge bases, and project history. Without this access, AI output may be syntactically correct but operationally irrelevant.

Strong version control practices

The discipline of frequent, small commits and the operational capability to roll back changes quickly. These practices reduce risk per change and support confidence in faster delivery.

Working in small batches

The practice of breaking changes into manageable units with fewer lines per commit, fewer changes per release, and shorter task completion times. Small batches reduce review burden and improve predictability.

User-centric focus

The extent to which teams connect their work to user outcomes and business value. Teams that understand this connection report higher engagement and purpose.

Quality internal platforms

The reliability, documentation, and usability of shared capabilities and services. Poor internal tooling is a persistent source of developer friction that undermines satisfaction regardless of AI investment.


Implementation and operating cadence

APEX is designed for fast rollout and continuous course correction, with a recommended operating cadence that connects each pillar to the right review interval.

Every organization operates differently. Sprint lengths vary, team sizes differ, and enterprise cadences don’t match startup cadences. The recommendations below represent our general best practices, but they should be adapted to fit your organization’s delivery rhythm and maturity.

A fast-moving team may review flow metrics weekly. A large enterprise may run DevEx surveys semi-annually. The principle matters more than the frequency: each pillar needs a regular review cycle that drives decisions.

Getting started

All four APEX pillars can be instrumented from the start. You don’t need to roll them out sequentially. Start with the metrics that matter the most to your organization and expand from there.

For most teams, AI leverage and flow efficiency are the fastest to baseline because they rely on data already flowing through your development tools. Instrument AI adoption at the PR level and capture your cycle time breakdown to establish where you stand today. Developer experience requires running your first survey, which takes more coordination but provides the qualitative baseline that quantitative metrics alone cannot. Predictability metrics build on top of your existing planning process. Once you’re tracking planning accuracy or capacity accuracy against sprint commitments, you have the feedback loop to start improving.

The goal is to move from baselining to an active operating cadence as quickly as possible. Pick your starting point, establish the cadence, and start making decisions based on what the data shows.

Recommended operating cadence

Weekly — AI leverage

Review AI leverage metrics weekly to investigate trends and changes across tools, teams, individuals, and repos. Weekly visibility into AI adoption at the PR level catches emerging patterns early, whether that’s a team where adoption has stalled, a repo where AI-assisted work is concentrated, or a shift in the human-AI contribution ratio that warrants investigation. Weekly reviews are lightweight; the goal is to monitor trends and detect signals early rather than to do a deep analysis. Some organizations may prefer biweekly or monthly reviews of AI leverage depending on the pace of their AI rollout. Early in adoption, weekly reviews help build momentum. Once adoption stabilizes, monthly may be sufficient.

Every sprint — predictability

Review predictability metrics at the end of each sprint or iteration. Assess planning accuracy and capacity accuracy against your targets and adjust plans for the next sprint based on what the data shows. Track quality diagnostics like change failure rate, rework, and defects as leading indicators that explain when and why predictability breaks down. If AI is generating volume that teams cannot reliably commit to or ship, this is where you’ll see it. Sprint-level review is the natural cadence for predictability because it aligns with the commitment cycle. Teams that review predictability only monthly lose the feedback loop that makes sprint planning improve over time. Organizations using continuous delivery without fixed sprints should still establish a regular interval to assess whether delivery commitments are holding.

Monthly — flow efficiency

Review flow efficiency metrics monthly to identify systemic bottlenecks and adjust your SDLC and engineering practices accordingly. Decompose cycle time into its phases to find where AI-driven velocity gains are getting absorbed by downstream constraints. Monthly cadence gives you enough data to distinguish real trends from noise and enough time to implement meaningful process changes between reviews. Organizations experiencing rapid change or actively rolling out new AI tooling may benefit from weekly flow reviews during those transitions. The key is that flow metrics should drive action — if cycle time hasn’t improved despite rising AI adoption, monthly review forces you to investigate why and respond with SDLC or process changes rather than waiting for the problem to compound.

Quarterly — developer experience

Run an org-wide developer experience survey quarterly to monitor team health, satisfaction, and AI readiness. Use the results to build an improvement roadmap for the next quarter. DevEx surveys are more intensive than metrics reviews because they require participation across the organization and produce qualitative data that takes time to analyze and act on. A quarterly cadence balances the need for regular sentiment data against survey fatigue. We recommend quarterly as a starting point. Fast-moving organizations with smaller teams may find value in more frequent pulse surveys. Larger enterprises where survey coordination is more complex often find semi-annual cadences more practical. What matters is that DevEx is measured regularly enough to serve as an effective guardrail. If satisfaction is dropping, you need to know before throughput gains erode.


Operationalize APEX today

AI usage is universal among software engineering teams. The challenge now is understanding whether AI adoption is creating sustainable value. APEX provides the answer by connecting AI leverage to the outcomes that matter: predictable delivery, efficient flow, and sustainable developer experience.

APEX treats AI as a production contributor by measuring AI at the pull request level and tracking its impact across the entire delivery system. APEX reveals whether faster code generation translates into faster delivery, or shifts bottlenecks downstream. The four north star metrics create clarity on where to focus improvement.

But measurement alone doesn’t drive improvement. APEX operationalizes productivity through weekly, per-sprint, monthly, and quarterly reviews that force decisions at the right intervals. This cadence prevents metric sprawl and ensures engineering investments align with business outcomes executives can trust.

LinearB is the only platform purpose-built to implement APEX at scale. It provides the instrumentation to track AI leverage across 50+ tools, the workflow automation to eliminate the bottlenecks AI exposes, and an integrated view of metrics and developer sentiment that reveals whether productivity gains are sustainable. Organizations using LinearB prove where AI is increasing throughput while protecting delivery confidence and developer experience.

The AI era demands a new operating model for engineering productivity. APEX provides the framework, and LinearB provides the platform to execute it.