AI in software engineering has reached near-universal adoption, but adoption alone doesn't guarantee results. According to LinearB's 2026 Engineering Benchmarks Report, which analyzed 8.1 million pull requests across 4,800 teams in 42 countries, 88.3% of developers now use AI regularly, up from just under 72% in early 2024. Yet beneath these adoption numbers lies a more complex story about how AI-generated code actually moves through engineering workflows and whether it delivers meaningful productivity gains.

While AI accelerates code generation at unprecedented scale, the data reveals significant bottlenecks in review cycles, acceptance rates, and code quality that prevent many organizations from realizing the productivity benefits they expected. For VPs of Engineering, Directors of Developer Experience, and CTOs evaluating their AI strategies, understanding these patterns is essential to moving from experimentation to measurable business outcomes.

Why AI adoption metrics hide real engineering impact

The 2026 benchmark report represents a fundamental expansion in how engineering organizations should evaluate AI effectiveness. Rather than relying solely on traditional delivery metrics like cycle time or throughput, the report introduces a new dimension focused specifically on AI productivity measurement, combining large-scale pull request analysis with qualitative insights from engineering leaders about their lived experience with AI adoption.

This dual-lens approach reveals a crucial gap between perception and reality. High adoption rates create an illusion of progress, but they don't prove value creation. Teams implementing AI tools must shift their measurement focus from usage volume to production outcomes.

The report frames AI productivity as an end-to-end systems question rather than a point solution. Using an AI readiness matrix adapted from DORA research, the analysis assesses whether organizations have built the operational foundations necessary for meaningful gains. This includes evaluating version control maturity, delivery habits, internal tooling reliability, and platform stability: the infrastructure that determines whether AI amplifies organizational strengths or magnifies existing weaknesses.

Two prerequisites emerge as particularly critical: data quality for AI adoption and AI policy alignment. Approximately 65% of surveyed organizations report that their data is not ready for AI models, creating conditions where hallucinations, incorrect recommendations, and context-free suggestions erode trust. Without dependable data that provides accurate context about internal libraries, architectural standards, and approved patterns, AI defaults to generating more code than necessary rather than integrating thoughtfully with existing systems.

The measurement discussion must also extend beyond speed to encompass technical debt and code quality. As leaders evaluate whether AI improves maintainability, security, and business impact, they need visibility into whether AI-generated code introduces new vulnerabilities, increases surface area for bugs, or creates maintainability challenges that will burden teams for years. The data shows AI is almost exclusively generating new code rather than refactoring legacy systems, raising important questions about long-term technical health.

AI-generated pull requests create larger changes and more new code

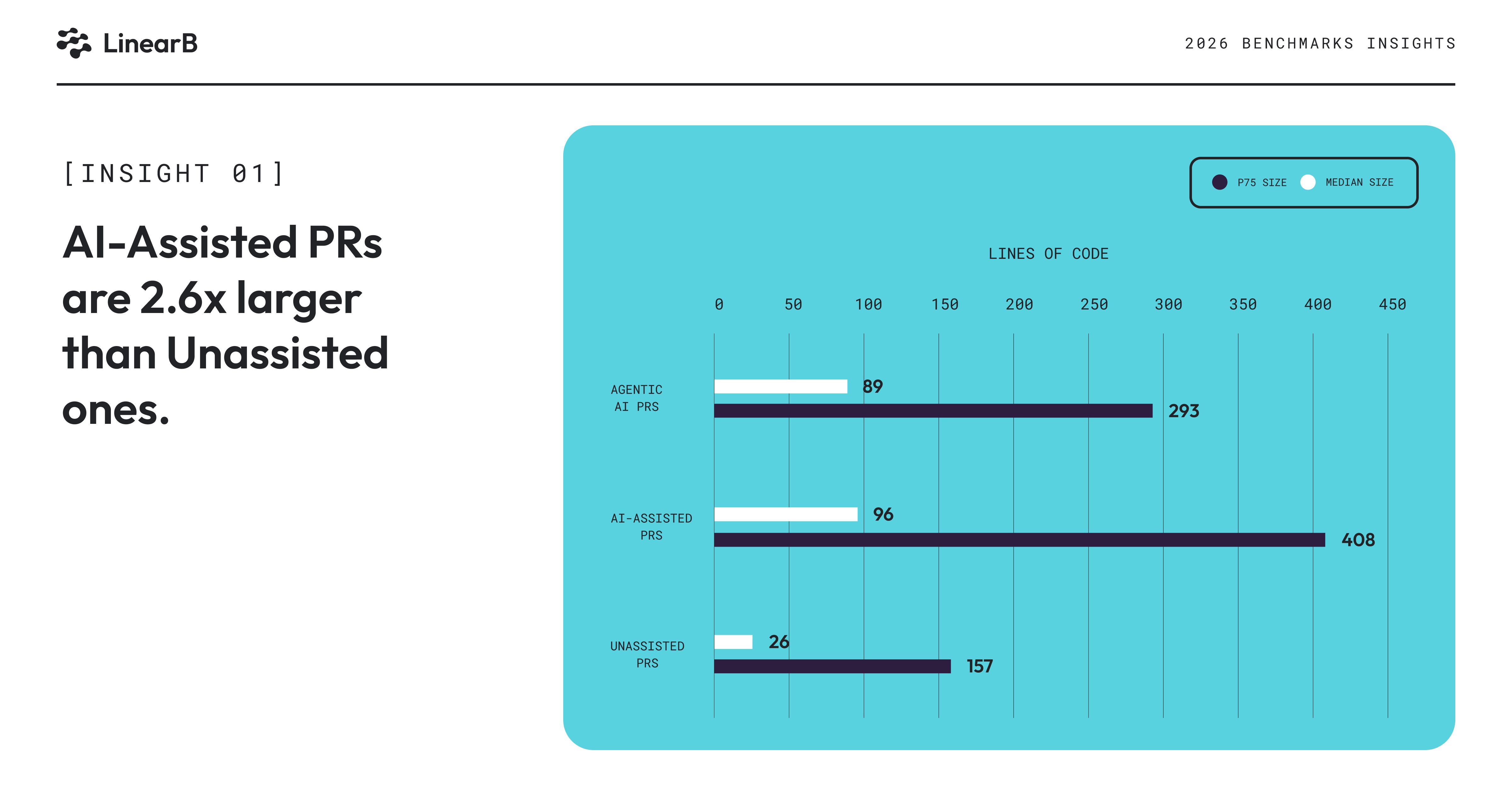

Not all AI-generated code behaves the same way in production workflows. The report distinguishes three categories: unassisted pull requests (authored entirely by humans without AI), AI-assisted pull requests (where developers use AI tools to significantly shape their work), and fully agentic pull requests (created entirely by AI agents like Claude or Codex). These classifications reveal materially different workflow patterns that engineering leaders must understand to set realistic expectations and design appropriate processes.

The size difference is striking and consequential: AI-assisted PRs tend to be about two and a half times larger than unassisted PRs. Looking at the 75th percentile, AI-assisted pull requests contain over 400 lines of code compared to 157 lines for unassisted work. Agentic pull requests fall in the middle at approximately 290 lines.

This expansion creates immediate downstream effects: larger changes increase cognitive load for reviewers, touch more parts of the system, and introduce higher complexity that makes thorough evaluation more difficult. LinearB has long recommended keeping pull requests below 300 lines because that represents a manageable chunk of information that humans can effectively hold in working memory, a threshold that AI-assisted work routinely exceeds.
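As a rough illustration of the size figures above, the sketch below computes a 75th-percentile PR size and the share of PRs at or above the 300-line guideline. The function names and the sample sizes are hypothetical, and the choice of the "inclusive" quantile method is an assumption; the report does not say how its percentiles are calculated.

```python
import statistics
from typing import List

# The 300-line guideline quoted in the text; PRs at or above it count as oversized.
REVIEWABLE_LIMIT = 300


def p75_size(sizes: List[int]) -> float:
    """75th-percentile PR size in changed lines (needs at least two PRs)."""
    # quantiles(n=4) returns the three quartile cut points; index 2 is the 75th percentile.
    return statistics.quantiles(sizes, n=4, method="inclusive")[2]


def share_over_limit(sizes: List[int], limit: int = REVIEWABLE_LIMIT) -> float:
    """Percentage of PRs whose size is at or above the limit."""
    if not sizes:
        return 0.0
    oversized = sum(1 for size in sizes if size >= limit)
    return 100.0 * oversized / len(sizes)


# Hypothetical PR sizes, for illustration only.
sample_sizes = [100, 200, 300, 400, 500]
print(p75_size(sample_sizes))
print(share_over_limit(sample_sizes))
```

Tracking both numbers per category (unassisted, AI-assisted, agentic) is one way to see whether a team's AI-assisted work is drifting past what reviewers can comfortably absorb.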

The data also reveals a critical insight about what AI generates: AI is almost exclusively generating new code. When examining refactor rates, which represent the percentage of code in a pull request that modifies existing code rather than creating new paths, unassisted PRs show approximately 37% refactoring at the 75th percentile. AI-assisted pull requests show nearly zero.
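The refactor rate described above can be sketched as a simple ratio. This is a minimal illustration that assumes each changed line has already been labeled as either new code or a modification of existing code; the `PullRequestDiff` type and its fields are hypothetical, and the report's actual classification method is not described here.

```python
from dataclasses import dataclass


@dataclass
class PullRequestDiff:
    """Hypothetical summary of one pull request's changed lines."""
    new_lines: int       # lines that introduce new code paths
    modified_lines: int  # lines that change code that already existed


def refactor_rate(diff: PullRequestDiff) -> float:
    """Percentage of changed lines that modify existing code."""
    total = diff.new_lines + diff.modified_lines
    if total == 0:
        return 0.0  # an empty diff has nothing to classify
    return 100.0 * diff.modified_lines / total


# Illustrative values only: a mixed change versus an all-new change.
print(refactor_rate(PullRequestDiff(new_lines=63, modified_lines=37)))
print(refactor_rate(PullRequestDiff(new_lines=400, modified_lines=0)))
```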

AI is not helping teams pay down technical debt or improve legacy code quality; instead, it's expanding codebases with new implementations that may duplicate functionality, ignore established internal libraries, or bypass architectural patterns the team has standardized on.

This pattern points directly to the concept of context engineering. Without strong guidance about internal libraries, architectural standards, and approved implementation paths, AI tools default to what's easiest for them: generating fresh code from scratch. Code generation is essentially free for an LLM, which doesn't experience the mental taxation that human developers face when writing complex logic. As a result, AI tends to be verbose, often implementing more than was requested and creating additional surface area that teams must now maintain.

Organizations that want AI to contribute meaningfully to their codebase health must invest in providing granular context: which libraries to use for specific functions, how to access shared components, what security requirements apply to different data types, and when to refactor versus rebuild. Without this context layer, AI will continue generating net-new code that increases system complexity rather than reducing it.

Agentic pull requests introduce an additional layer of complexity around ownership and trust. When a human developer submits work, there's clear accountability and a social contract with reviewers. When an AI agent opens a pull request, that ownership becomes ambiguous. Reviewers don't know whom to ask when they have questions, don't feel the same urgency to help a colleague ship their work, and face uncertainty about how much scrutiny to apply. These dynamics make agentic work harder to prioritize within normal team workflows, contributing to the significant delays the data reveals.

Why most AI-generated pull requests never reach production

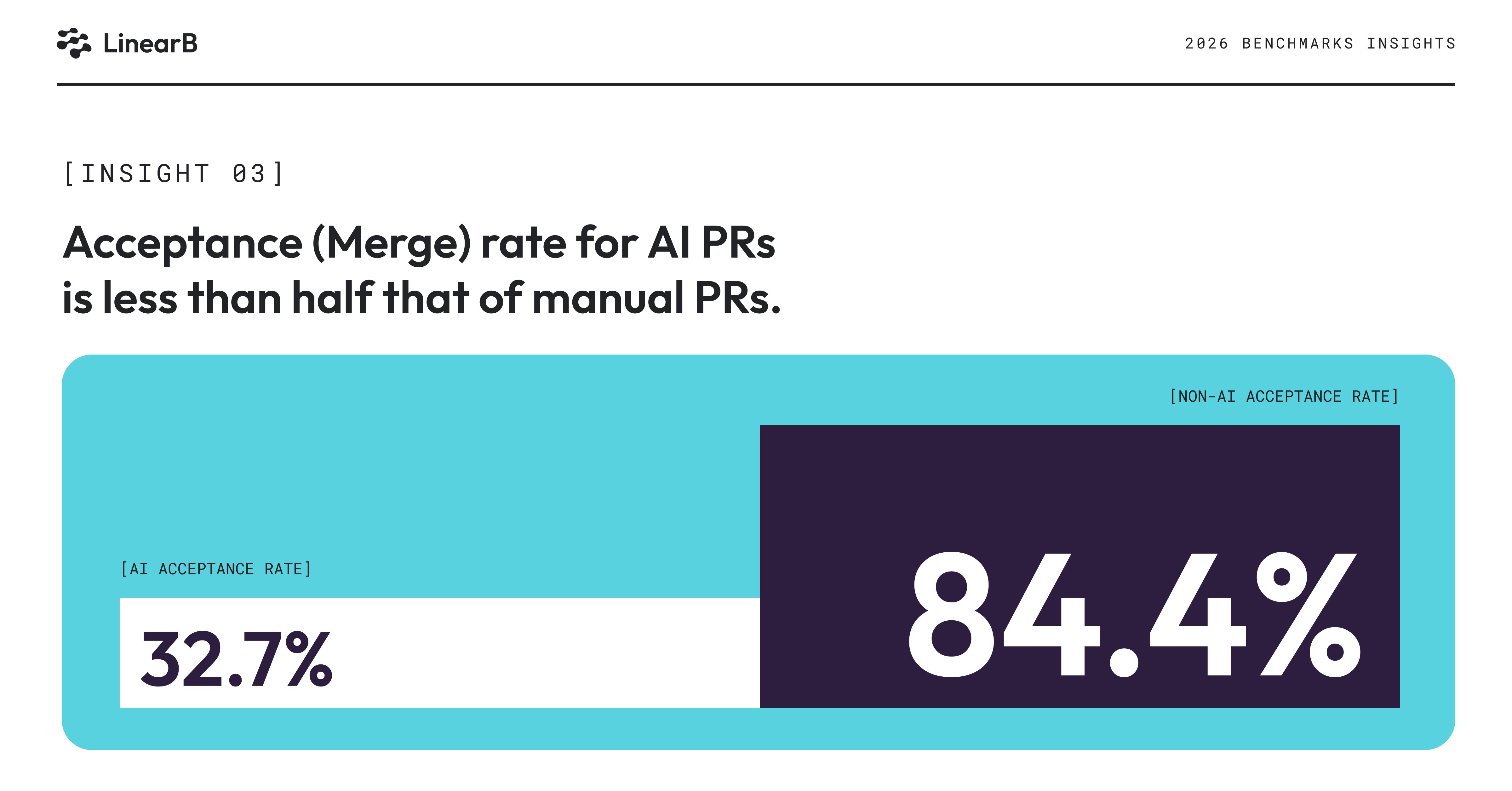

Traditional AI productivity metrics focus on suggestion acceptance: the percentage of AI recommendations that developers accept in their editor. But this metric is fundamentally misleading because it measures a proposal, not production impact. A developer might accept an AI suggestion, then delete half of it during refinement, or the entire pull request might never merge. To capture whether AI-generated work actually delivers value, the benchmark report introduces a more meaningful measure: pull request acceptance rate. This measures the percentage of pull requests that get merged within 30 days.
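A minimal sketch of the pull request acceptance rate might look like the following. It assumes the 30-day window starts when the PR is opened, which the text does not state explicitly, and it represents each PR as a hypothetical `(opened_at, merged_at)` pair where `merged_at` is `None` for PRs that never merged.

```python
from datetime import datetime, timedelta
from typing import Optional, Sequence, Tuple

# Assumption: the 30-day window is measured from the moment the PR is opened.
MERGE_WINDOW = timedelta(days=30)

PullRequest = Tuple[datetime, Optional[datetime]]  # (opened_at, merged_at or None)


def acceptance_rate(prs: Sequence[PullRequest]) -> float:
    """Percentage of PRs merged within MERGE_WINDOW of being opened."""
    if not prs:
        return 0.0
    accepted = sum(
        1
        for opened_at, merged_at in prs
        if merged_at is not None and merged_at - opened_at <= MERGE_WINDOW
    )
    return 100.0 * accepted / len(prs)


# Hypothetical PRs: merged quickly, merged too late, never merged, merged on day 30.
opened = datetime(2026, 1, 1)
sample_prs = [
    (opened, opened + timedelta(days=2)),
    (opened, opened + timedelta(days=45)),
    (opened, None),
    (opened, opened + timedelta(days=30)),
]
print(acceptance_rate(sample_prs))
```

Unlike suggestion acceptance, this counts only work that actually merged, so a PR that is opened and later abandoned lowers the rate.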

This metric tracks whether AI-generated code survives the complete gauntlet of review, testing, and governance to reach production, the only stage where it can create business value. The results reveal a stark performance gap that should concern every engineering leader investing in AI tooling.

Manual, unassisted pull requests merge at approximately 84.5%, a healthy rate indicating that when experienced developers propose changes, they generally understand requirements, follow standards, and produce work that teams accept. AI-generated pull requests, by contrast, merge at just 32.7%, less than half the rate of human-authored code.

This gap exposes the difference between code generation velocity and actual delivery impact. AI can produce vast volumes of code quickly, but if most of that code never reaches customers, the productivity gains are illusory. The low acceptance rate suggests several possible failure modes: AI-generated code may not meet quality standards, may solve the wrong problem, may introduce security or maintainability concerns that block merge, or may simply represent speculative work that teams abandon when priorities shift.

The metric also highlights the importance of measuring AI productivity through an end-to-end systems lens. Widespread AI use is not yet translating into equivalent delivery impact because downstream processes, including code review, testing, security scanning, and architectural review, were designed for human-authored code at human scale. When AI floods these gates with larger, more numerous pull requests, bottlenecks emerge that prevent work from flowing through to production.

Leaders should pair acceptance rate with broader operational indicators to diagnose where their AI strategy is breaking down. Are AI-generated pull requests failing review because of quality issues? Are they sitting unmerged because teams lack capacity to evaluate them? Are they being abandoned because the original requester moved on before the work completed? Each pattern points to different interventions: better context engineering, adjusted review processes, clearer ownership models, or more selective application of AI to high-value scenarios.

Code review delays erase AI productivity gains

The journey from code generation to production deployment depends critically on code review, and this is where AI-generated work encounters its most significant obstacles. The data reveals a dramatic bottleneck at the review pickup stage, the time between when a pull request is opened and when a reviewer begins evaluating it.

AI-generated pull requests wait more than 16 hours (960 minutes) on average before a reviewer picks them up, compared to approximately 200 minutes for unassisted work, a delay roughly five times as long. This hesitation stems from multiple factors that qualitative survey responses help illuminate. Reviewers face uncertainty about the mental load required to evaluate AI work, concerns about whether the code will contain errors or missing context, and ambiguity about ownership that reduces the social motivation to help a colleague ship their work.
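Review pickup time, as defined above, is the gap between a PR being opened and a reviewer starting on it. The sketch below shows one way to compute it in minutes; the function names are hypothetical, and treating the first review event's timestamp as "pickup" is an assumption about how the measurement is taken.

```python
from datetime import datetime
from statistics import mean
from typing import Iterable, Tuple


def pickup_minutes(opened_at: datetime, first_review_at: datetime) -> float:
    """Minutes between a PR being opened and its first review activity."""
    return (first_review_at - opened_at).total_seconds() / 60


def average_pickup_minutes(prs: Iterable[Tuple[datetime, datetime]]) -> float:
    """Mean pickup time across (opened_at, first_review_at) pairs.

    Raises statistics.StatisticsError if no PRs are supplied.
    """
    return mean(pickup_minutes(opened, reviewed) for opened, reviewed in prs)


# Hypothetical timestamps: one PR picked up the same morning, one the next day.
same_day = (datetime(2026, 1, 5, 9, 0), datetime(2026, 1, 5, 12, 20))
next_day = (datetime(2026, 1, 5, 9, 0), datetime(2026, 1, 6, 1, 0))
print(pickup_minutes(*same_day))
print(pickup_minutes(*next_day))
print(average_pickup_minutes([same_day, next_day]))
```

Computing this separately for unassisted, AI-assisted, and agentic PRs makes it easier to see whether the pickup delay in a given team follows the pattern described here.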

The larger size of AI-assisted pull requests compounds this reluctance. A 400-line change touching multiple parts of the system requires significantly more cognitive effort to review thoroughly than a focused 150-line change. Reviewers must understand more context, trace through more logic paths, and consider more edge cases. When that investment is required for code that may have been generated without deep understanding of system constraints, reviewers rationally delay engaging until they have sufficient time and mental energy.

Paradoxically, once review begins, AI-generated pull requests move through the review cycle faster, taking approximately 194 minutes compared to 252 minutes for manual work. But this speed should not be interpreted as a positive signal. Instead, it likely reflects superficial validation rather than thorough scrutiny.

When reviewers finally engage with AI-generated code, they often scan for high-level issues rather than deeply understanding the change. This shallow inspection allows them to complete reviews quickly, but it also means that defects, security vulnerabilities, and maintainability problems slip through to production. Survey respondents expressed concern that a larger amount of code is slipping into production without proper review, a pattern that creates technical debt and risk that teams will pay for over time.

The faster review cycle may also reflect reviewer fatigue and resignation. When faced with a large AI-generated change that would require hours to properly evaluate, reviewers make pragmatic decisions to focus on obvious problems and trust that automated checks caught deeper issues. Relying on automated review without deep human scrutiny is reasonable when robust protections exist throughout the SDLC. Without them, it creates significant risk, particularly for security, defects, and architectural consistency, all areas where AI models frequently make mistakes that static analysis tools miss.

These bottlenecks position code review as the critical constraint in AI-assisted development. AI has accelerated code generation far faster than organizations have improved the surrounding controls and processes. To realize productivity gains, teams need better standards for what AI should generate, clearer workflows for how to handle AI-originated work, and stronger quality guardrails that catch issues before code reaches production.

Engineering leaders should focus on three interventions. First, provide AI tools with better context so they generate code that's closer to merge-ready quality. Second, redesign review processes to handle the volume and characteristics of AI-generated work, potentially including specialized review tracks or enhanced automation. Third, strengthen quality gates through better automated testing, security scanning, and architectural validation so that reviewers can focus on higher-level concerns rather than catching basic errors. The LinearB AI Insights dashboard helps teams correlate AI adoption with delivery outcomes, surfacing exactly where the breakdowns occur in their own workflows.

The fundamental insight is that improving engineering productivity with AI is a systems problem, not a tooling problem. Until organizations adapt their entire SDLC to work effectively with AI-generated code, adoption rates will continue to outpace impact.