Engineering leaders are navigating uncharted territory as AI tools proliferate across teams and developer workflows. Everyone is trying to figure out the same thing: Is AI actually working? How is it impacting delivery outcomes?

With LinearB, you can track adoption across 50+ AI tools and measure downstream impact, correlating AI activity to commits, pull requests (PRs), and delivery. Together with our new APEX framework for measuring AI engineering productivity, we give you the data and operational model you need to prove and scale impact.

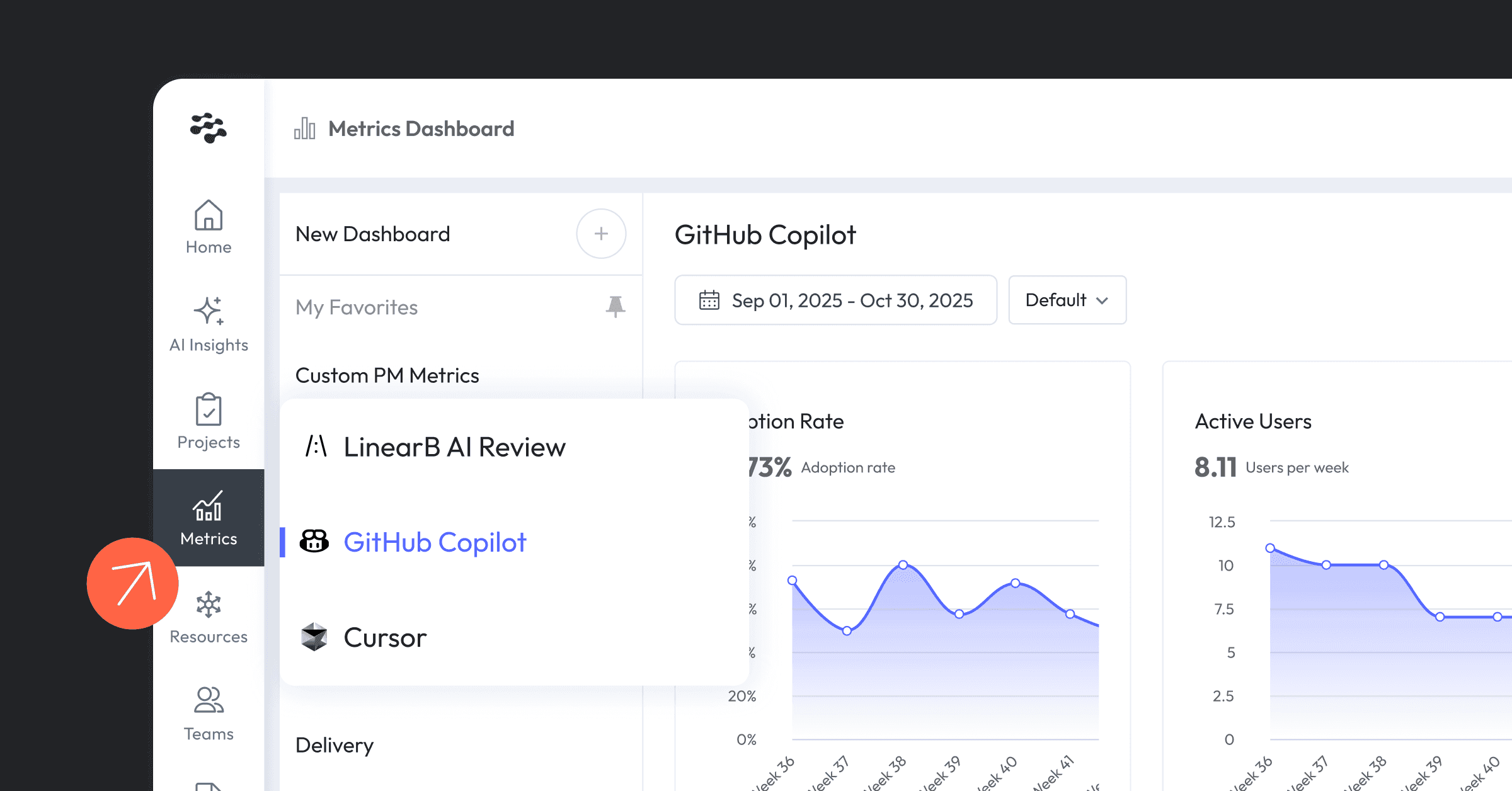

Track AI adoption across workflows, tools, teams

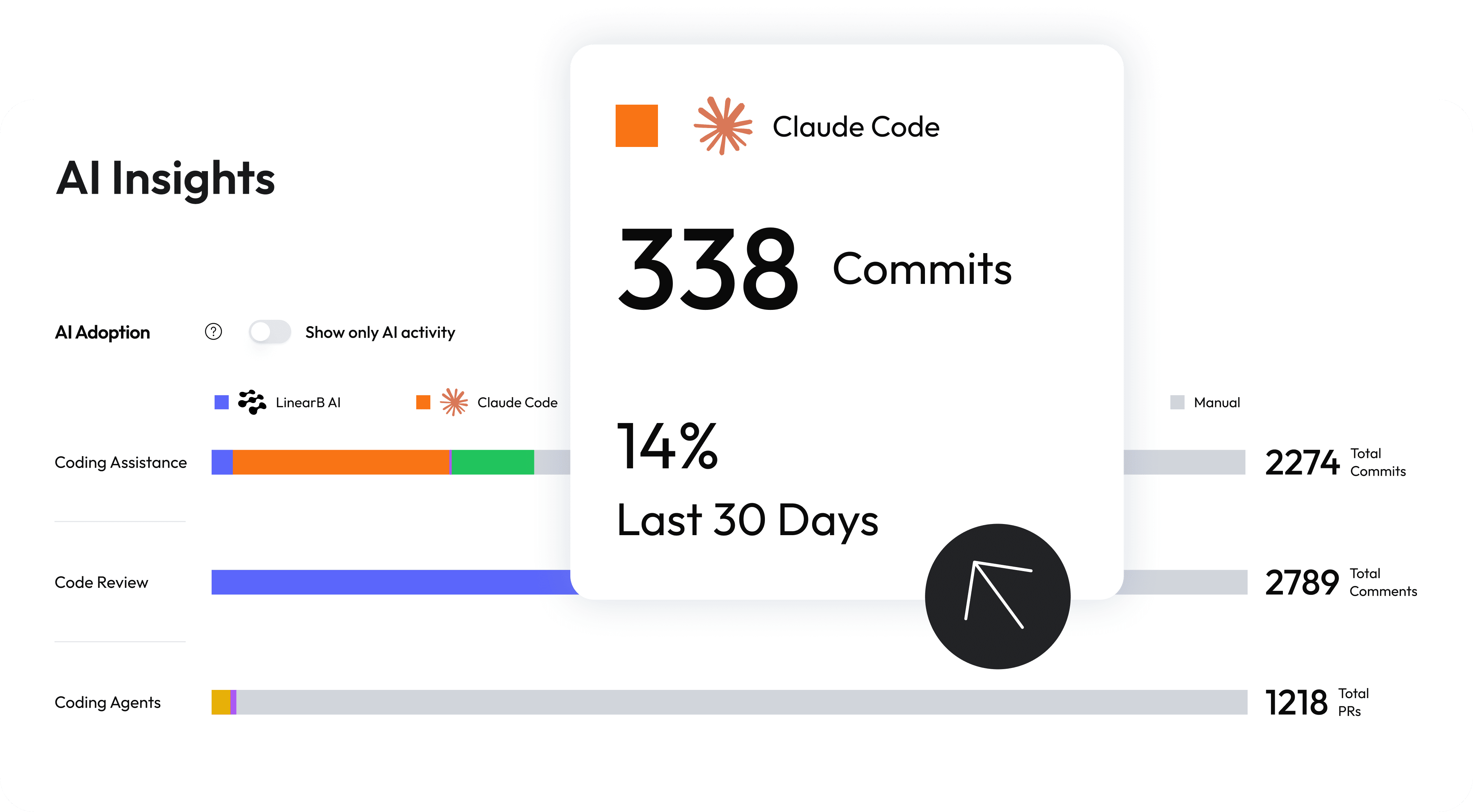

Before jumping to impact, you'll want to get a clear baseline for adoption across AI tools, grounded in actual engineering work from your team. With no local installation, get a snapshot of AI activity through pattern matching across Git signals and direct API integrations.
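To make "pattern matching across Git signals" concrete, here is a minimal sketch of one possible signal: commit-message trailers. It is not LinearB's detection logic. The pattern table, `detect_ai_tools`, and `adoption_rate` are hypothetical names, and the trailer patterns are assumptions for illustration, since real tools mark their commits in different ways or not at all.

```python
import re

# Hypothetical trailer patterns (assumptions for illustration only).
# Each one looks for a "Co-authored-by:" trailer line naming a tool.
AI_TRAILER_PATTERNS = {
    "copilot": re.compile(r"^co-authored-by:.*copilot", re.IGNORECASE | re.MULTILINE),
    "claude": re.compile(r"^co-authored-by:.*claude", re.IGNORECASE | re.MULTILINE),
}


def detect_ai_tools(commit_message: str) -> set[str]:
    """Return the names of tools whose pattern matches the commit message."""
    return {
        tool
        for tool, pattern in AI_TRAILER_PATTERNS.items()
        if pattern.search(commit_message)
    }


def adoption_rate(commit_messages: list[str]) -> float:
    """Share of commits that match at least one AI pattern (0.0 if empty)."""
    if not commit_messages:
        return 0.0
    flagged = sum(1 for message in commit_messages if detect_ai_tools(message))
    return flagged / len(commit_messages)


messages = [
    "Fix pagination bug\n\nCo-authored-by: Claude <noreply@example.com>",
    "Refactor billing module",
]
rate = adoption_rate(messages)
```

A heuristic like this only sees commits that carry an explicit marker, which is one reason to pair it with direct API integrations where they exist.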

With complete visibility into your AI tool landscape, measure adoption across coding assistance, code reviews, and coding agents, and track where AI agent config files are guiding IDEs and CI actions.

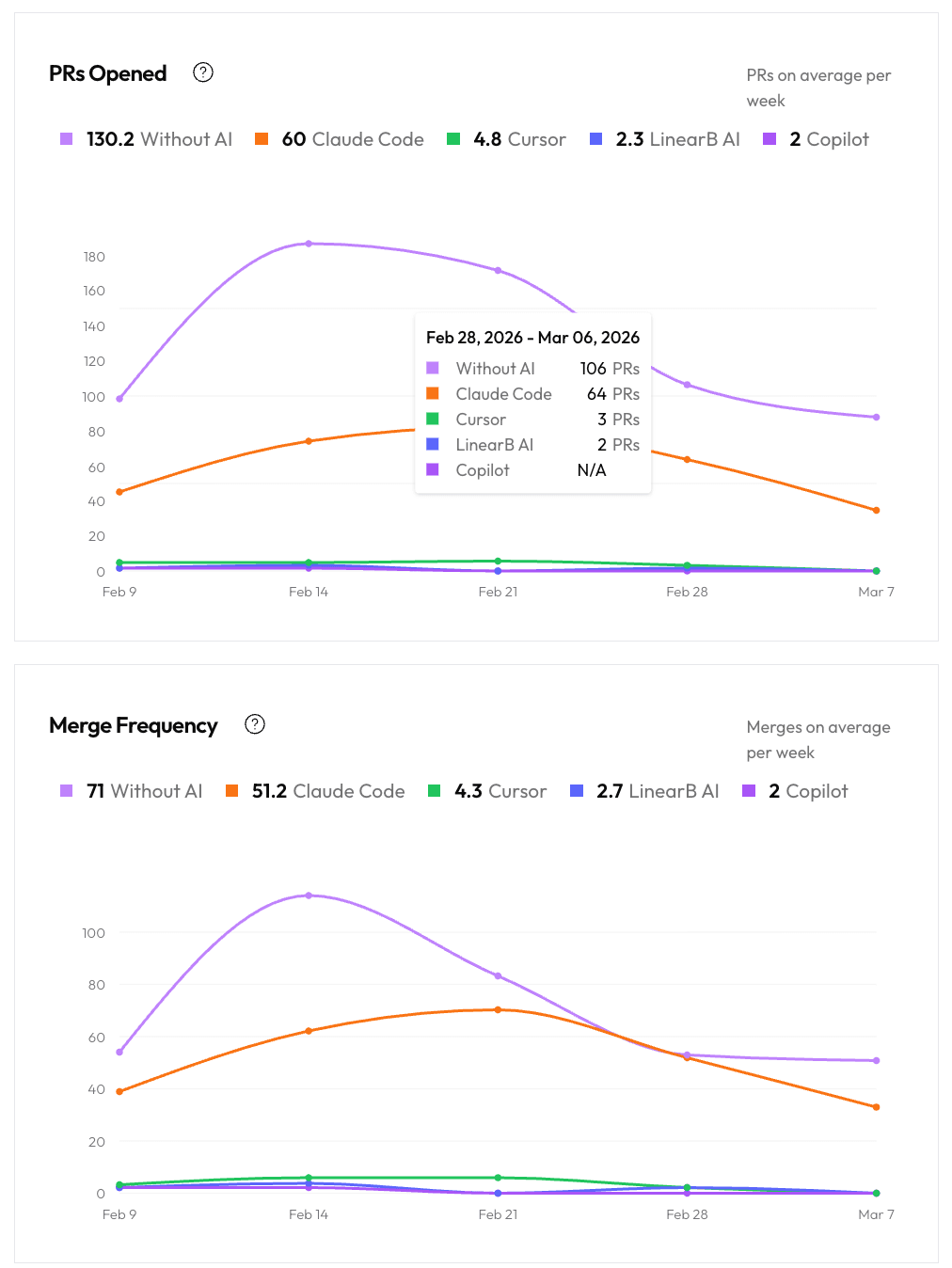

For tools with direct API integration, dive deeper into usage by comparing trends for PRs with and without AI assistance, and drill into adoption by tool, team, or user.

Understand how AI impacts delivery

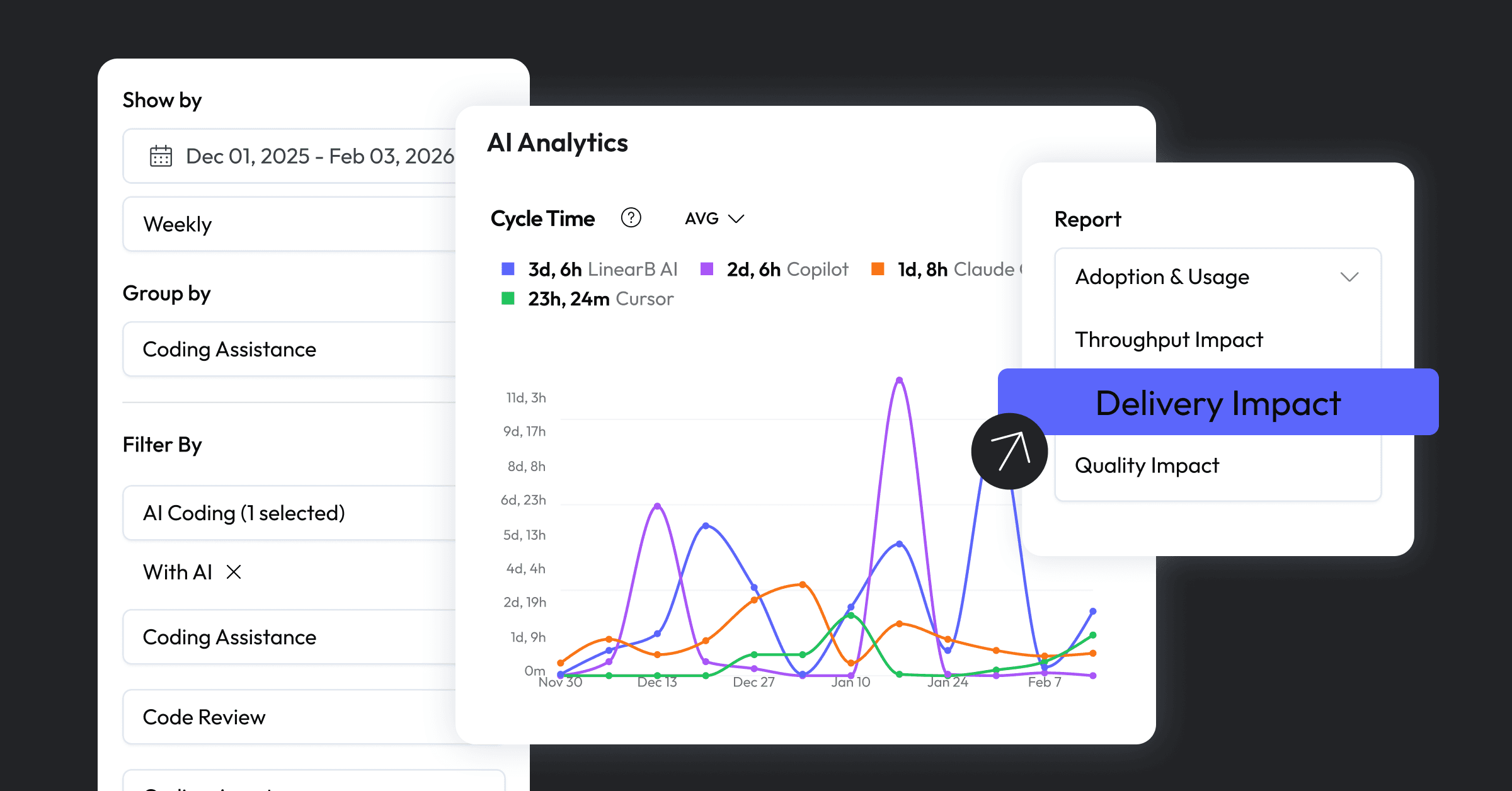

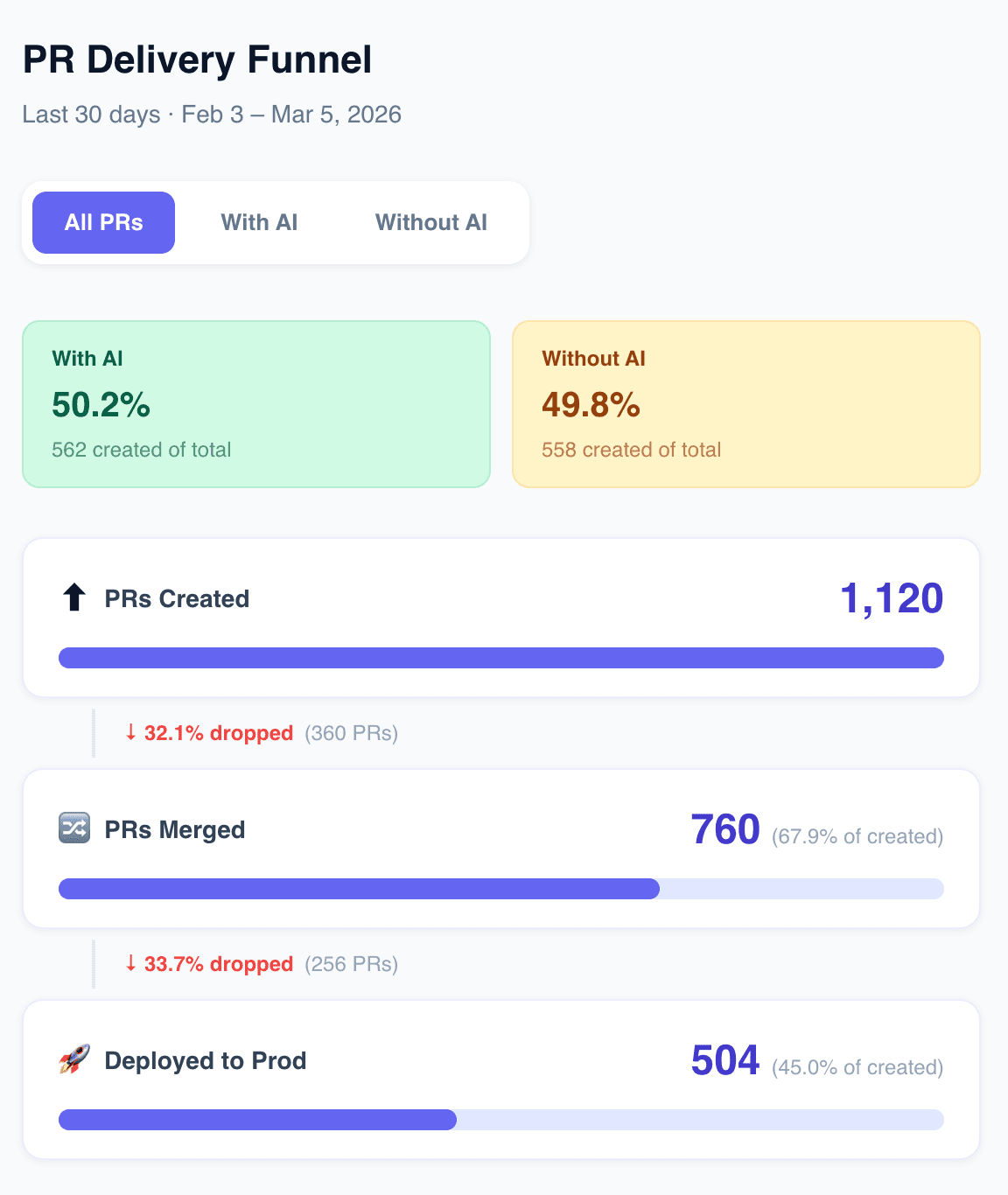

With a unified view of AI activities at the PR level, you can correlate them to the outcomes that matter. Compare delivery, throughput, and quality metrics segmented by AI involvement, and filter by tool, workflow, repo, team, and user to see where AI is making the most impact.

With AI Analytics, answer questions like:

- How much of our code is AI-assisted?

- Is AI speeding up delivery, or just generating code faster while everything else stays slow?

- Is AI introducing more risk, rework, or quality issues?

- Which tools are adopted across the org?

Compare AI-assisted and non-AI work side by side, break cycle time into stages to pinpoint exactly where AI is (or isn't) making a difference, and track whether AI is maintaining or degrading code health.

Using the MCP Server

And if you want to build custom dashboards on the fly or access recommendations on how to address bottlenecks, you can query AI impact metrics in natural language with the LinearB MCP Server. It connects to your full metrics suite so you can get answers fast.

Optimize engineering productivity: Measure, refine, repeat

Evaluate AI effectiveness on teams and actual delivery outcomes. You might find teams with high AI adoption but little improvement in throughput, signaling a need for better enablement or guardrails. You might discover AI tools improve throughput but are simply shifting work from coding to review and rework.

These insights let you answer whether your AI investments are working.

Turn metrics into decisions with the APEX framework

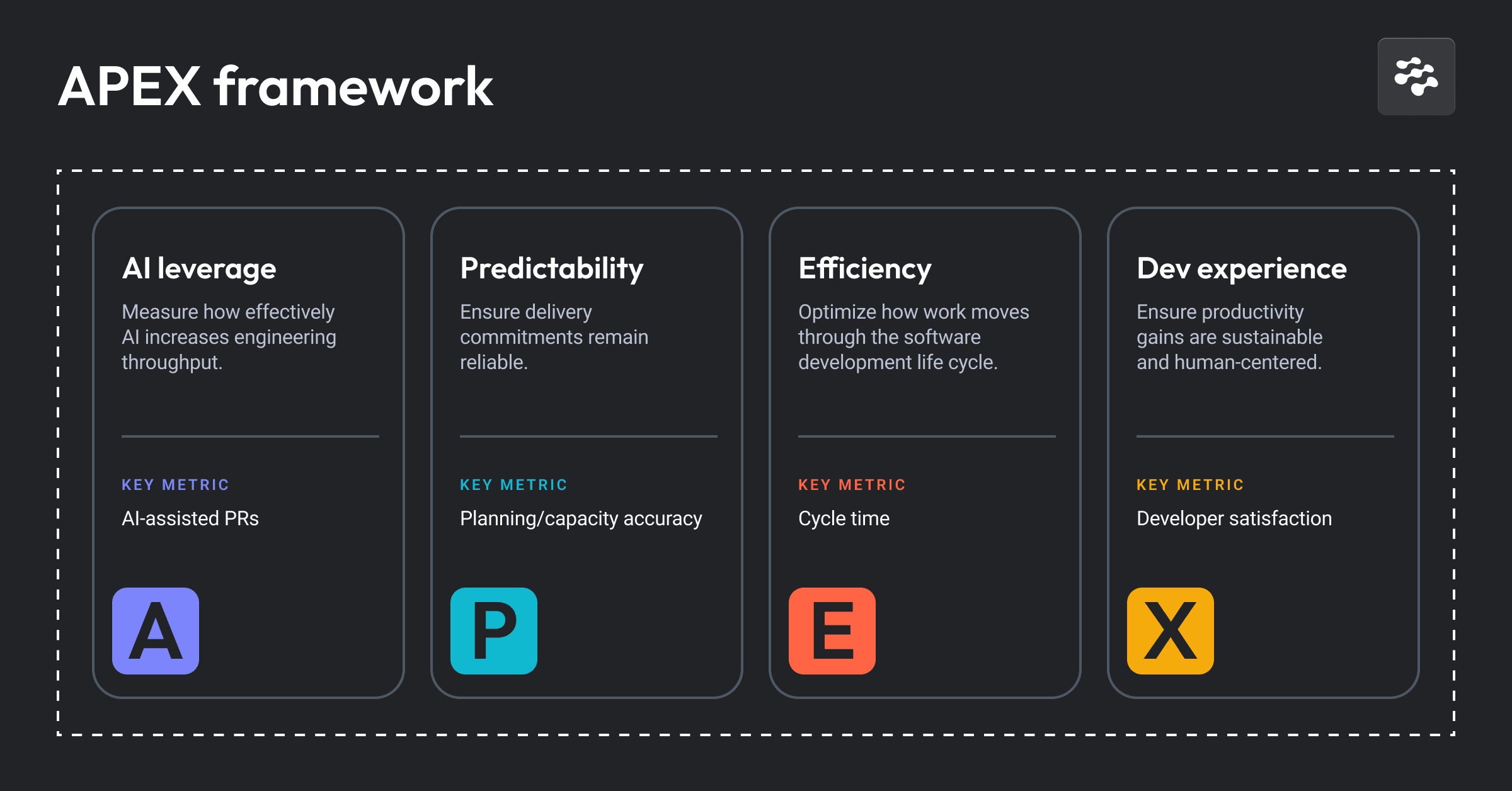

To help you act on these insights, apply our APEX framework, a system-level approach to measuring and scaling AI without sacrificing predictability or developer health. APEX defines four pillars:

- AI leverage: Measure how effectively AI and automations increase throughput.

- Predictability: Protect delivery commitments and reduce instability.

- Flow efficiency: Optimize how smoothly work moves from start to merge.

- Developer experience: Ensure productivity gains remain sustainable and human-centered.

Together, these pillars signal whether AI improves throughput without eroding delivery confidence or burning out your team. APEX establishes a weekly, monthly, and quarterly cadence that turns metrics into action, so you can roll out AI faster and course-correct as you scale.

Explore the APEX framework to get the complete operating model and practical guidance on how to measure and prove sustainable AI impact.

More updates to explore

Beyond AI measurement, we also released several enhancements, including MCP Server OAuth support, configurable AI Code Review limits, and auto-sync for GitHub teams. Check out our release notes for more details.

Get started today

Start your free trial and register for our live demo this Thursday, Mar 26, to begin measuring and scaling AI impact for optimal developer productivity. Put APEX into practice, break down data silos, and correlate AI activity directly to delivery outcomes.