Much of the recent progress in the software industry arguably comes from the struggle to reconcile two seemingly conflicting goals: speed and quality. To remain competitive, companies need to deliver quality to their customers at ever-shorter intervals.

However, it’s no use shipping software fast if you’re shipping broken software.

So how can organizations adopt effective quality measures without slowing down their software delivery process?

The answer, perhaps unsurprisingly, is automation: specifically, automating parts of the software development process itself. Today, we’re covering one specific aspect of this: quality gates.

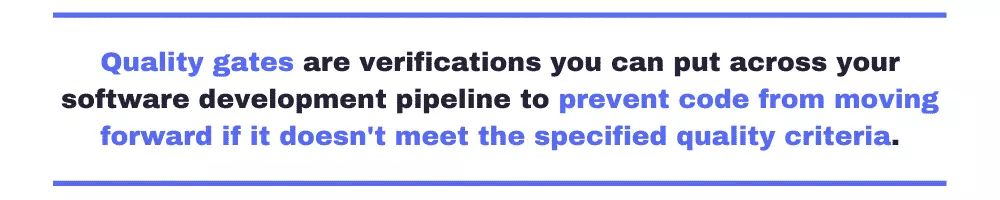

Quality gates are verifications you can put across your software development pipeline to prevent code from moving forward if it doesn’t meet the specified quality criteria. If the analyzed code is OK, it can go on until it reaches the next gate. On the other hand, if it doesn’t look that great, it stops there.

There’s more to it than that, though.

- What are the benefits of adopting quality gates?

- How do quality gates actually prevent faulty code from moving forward?

- What are some examples of real quality gates?

To learn the answers to these questions and more, keep reading.

What is a Quality Gate in Software Development?

We’ve already given a simple definition of quality gates. Now we’ll go a little deeper.

You’ll see that “quality gate” can actually mean two things. First, there’s a general definition, which is more abstract and subjective. Second, there are the more concrete and objective quality gates implemented by various tools.

Let’s now see the differences between these two.

Quality Gates as in “Best Practices”

You can think of quality gates as quality checkpoints in each software project phase. Every time the project is about to reach an important milestone, you might want to pause and verify whether the current result meets the expected standards.

Each time the project reaches a gate, it must be evaluated against the defined quality criteria. It then gets a status, which can be a binary option (either it passed or failed) or a more nuanced alternative (e.g., success/failure/warning).
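To make the idea of a gate status concrete, here’s a minimal sketch in Python. The criterion names and thresholds are hypothetical, and we assume that higher measured values are better; a real gate would use whatever criteria your organization defines.

```python
from dataclasses import dataclass
from enum import Enum


class GateStatus(Enum):
    PASSED = "passed"
    WARNING = "warning"
    FAILED = "failed"


@dataclass(frozen=True)
class Criterion:
    """One quality criterion; higher measured values are assumed to be better."""
    name: str
    fail_below: float  # a measured value under this fails the gate
    warn_below: float  # a measured value under this (but not failing) warns


def evaluate_gate(criteria: list[Criterion], measured: dict[str, float]) -> GateStatus:
    """Return the worst status found across all criteria."""
    status = GateStatus.PASSED
    for criterion in criteria:
        value = measured[criterion.name]
        if value < criterion.fail_below:
            return GateStatus.FAILED  # one failing criterion fails the gate
        if value < criterion.warn_below:
            status = GateStatus.WARNING
    return status


# Hypothetical criteria for a release milestone.
criteria = [
    Criterion("test_pass_rate", fail_below=0.95, warn_below=1.0),
    Criterion("requirements_signed_off", fail_below=0.8, warn_below=0.9),
]
measured = {"test_pass_rate": 0.97, "requirements_signed_off": 0.95}
print(evaluate_gate(criteria, measured).value)
```

Dropping the `WARNING` branch gives you the binary pass/fail variant.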

The specific criteria used will, of course, vary depending on a number of factors, such as the type of the product or service in development, the size and nature of the organization, and more.

For instance, some fields, such as finance and health care, are highly regulated. It makes sense that software projects in these industries have quality gates tied to legal and compliance requirements. Projects that aren’t as critical or regulated might have fewer quality gates, so they can get their products or services into the hands of customers sooner.

How does this kind of quality gate work?

Again, it varies. You might have anything from more informal approaches to a highly rigid checklist that requires sign-offs from the responsible stakeholders at each quality gate.

Quality Gates as in “Automated Checks”

As you’ve seen, the previous version of a quality gate is somewhat subjective in its definition and mostly manual in its application. Also, it’s more applicable at the project level.

Now, we’ll cover quality gates in the sense of automatic verifications performed on the code. Unlike the previous one, this definition of quality gate is objective, automated, and usually applied at the code level.

So, in this context, a quality gate is an automated verification you can use to enforce adherence to one or more quality standards. Like the previous type of quality gate, this one also preserves the metaphor. Think of it as an actual gate that prevents the code from going forward in the software development lifecycle (SDLC) pipeline if it doesn’t meet the defined quality criteria.

OK, but what exactly happens when code gets blocked by a quality gate?

Again, it depends on the tool and its configuration. At a minimum, you should aim for a notification, via email or a messaging app such as Slack.

You could, for instance, integrate quality gates into your pull request (PR) process. Every time an engineer submits a pull request, their code is checked against the defined quality gates.

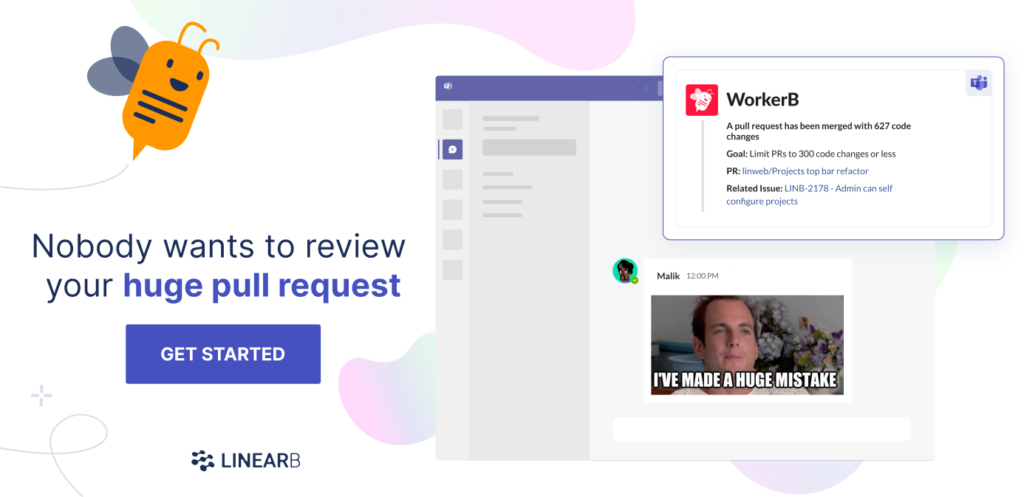

One example might be PR size. If the team establishes a goal that PRs should contain no more than 225 changes, our WorkerB bot can warn the team when a PR is too large. The reviewer can then decide whether to merge it or not.
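If you’re curious what a warning-only check like this boils down to, here’s a rough sketch in Python. It isn’t how WorkerB is implemented; the `PullRequest` class is hypothetical, and we assume that “changes” means added plus deleted lines.

```python
from dataclasses import dataclass
from typing import Optional

MAX_PR_CHANGES = 225  # the team goal from the example above


@dataclass(frozen=True)
class PullRequest:
    """Hypothetical PR summary; the field names are illustrative."""
    title: str
    additions: int
    deletions: int

    @property
    def changes(self) -> int:
        # Assumption: a "change" is any added or deleted line.
        return self.additions + self.deletions


def pr_size_warning(pr: PullRequest, limit: int = MAX_PR_CHANGES) -> Optional[str]:
    """Return a warning message if the PR exceeds the limit, otherwise None."""
    if pr.changes > limit:
        return f"PR '{pr.title}' has {pr.changes} changes (goal: at most {limit})."
    return None


warning = pr_size_warning(PullRequest("Add billing export", additions=310, deletions=42))
if warning:
    print(warning)
```

Note that the function only returns a message. It doesn’t block anything, which matches the idea that the reviewer makes the final call.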

Improve your code quality and developer workflow with smaller pull requests. Get started with your free-forever LinearB account today.

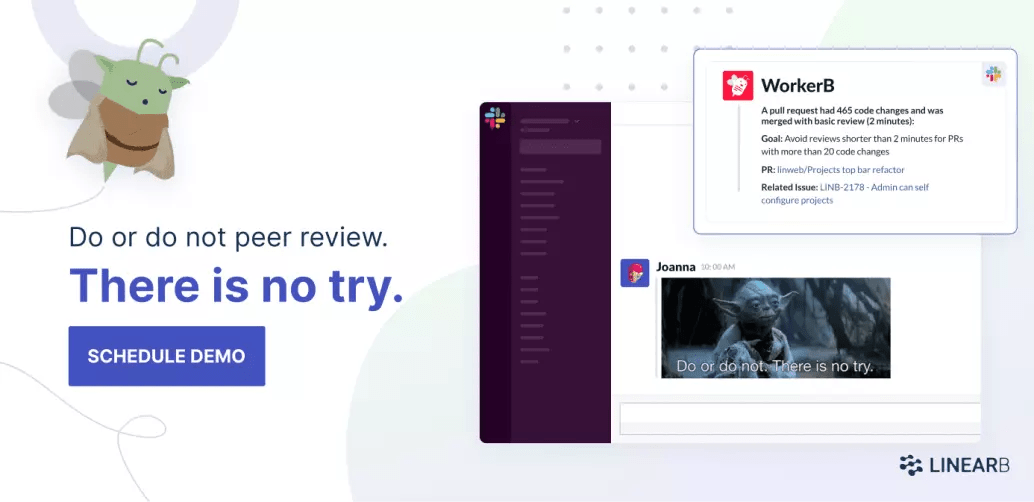

Another option might be the implementation of quality gates for basic reviews. Superficial “looks good to me” (LGTM) reviews can allow bad code to slip through the cracks. Our WorkerB bot can warn your team when a superficial review is about to be merged or has been merged, so you can assign an additional set of eyes to check for quality issues.

Avoid superficial LGTM reviews. Use Team Goals + WorkerB to reinforce good habits around review depth. Get started today!

Do you want a stricter, more reliable way to keep code that fails quality gates from reaching production? In that case, you’d probably want to configure your CI/CD (continuous integration / continuous deployment) software so the build fails when code doesn’t pass the gates.
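Most CI/CD tools treat a non-zero exit code from a step as a failed build, so a gate can be as simple as a script that exits with 1. Here’s a minimal sketch in Python. The thresholds and the script name are hypothetical, and in a real pipeline the two inputs would come from your coverage and static analysis reports.

```python
import sys

# Hypothetical thresholds; tune them to your own standards.
MIN_COVERAGE_PERCENT = 80.0
MAX_BLOCKER_ISSUES = 0


def gate_failures(coverage_percent: float, blocker_issues: int) -> list[str]:
    """Return a human-readable message for every gate that did not pass."""
    failures = []
    if coverage_percent < MIN_COVERAGE_PERCENT:
        failures.append(
            f"coverage {coverage_percent:.1f}% is below {MIN_COVERAGE_PERCENT:.1f}%"
        )
    if blocker_issues > MAX_BLOCKER_ISSUES:
        failures.append(
            f"{blocker_issues} blocker issue(s), at most {MAX_BLOCKER_ISSUES} allowed"
        )
    return failures


def main(coverage_percent: float, blocker_issues: int) -> int:
    """Print failures to stderr and return the process exit code."""
    failures = gate_failures(coverage_percent, blocker_issues)
    for failure in failures:
        print(f"QUALITY GATE FAILED: {failure}", file=sys.stderr)
    return 1 if failures else 0


# Usage (hypothetical file name): python quality_gate.py 76.5 0
if __name__ == "__main__" and len(sys.argv) == 3:
    sys.exit(main(float(sys.argv[1]), int(sys.argv[2])))
```

Run as a pipeline step after your tests, a script like this stops the build whenever either threshold is missed.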

Quality Gates: Here Are Some Real Examples

Before wrapping up, we’ll show some examples of real quality gate criteria, collected from various tools. That way, you get a real sense of what an automated quality gate process looks like and the type of feedback it can provide you.

- Code coverage: The percentage of code covered by automated unit tests.

- Branch coverage: A more specific version of code coverage that measures the percentage of branches (the possible outcomes of each decision point, such as both sides of an `if`) exercised by tests.

- Code coverage on new code: The same as the first example, but targeting only new code.

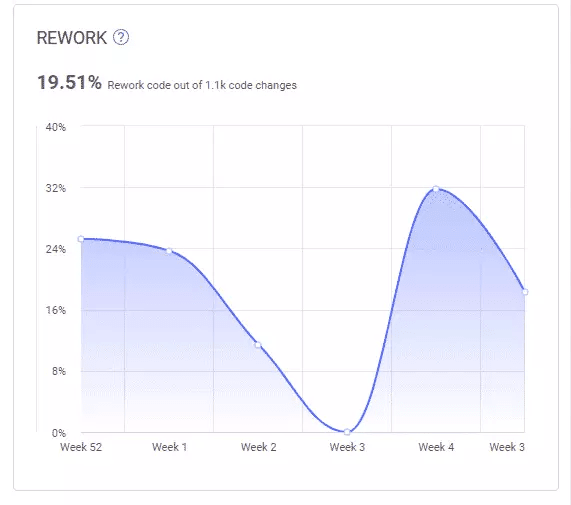

- Code churn: How soon and often code gets rewritten, which might be a red flag in various ways.

- Number of blocker issues: The number of critical issues in the code.

- Dependency or architectural rules broken: You might have architectural constraints on your code (e.g., classes from the “Domain” module can’t reference the “Presentation” module), and it might be valuable to ensure these aren’t broken.
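As an illustration of the last criterion, here’s a minimal sketch of an architectural rule check. It assumes a Python codebase where the “Domain” and “Presentation” modules are top-level packages named `domain` and `presentation`; those names and the `FORBIDDEN_IMPORTS` table are hypothetical. Dedicated tools do this far more thoroughly, but the core idea is the same: inspect the imports and flag the ones that cross a forbidden boundary.

```python
import ast

# Hypothetical rule table: modules under the key package must not
# import anything from the listed packages.
FORBIDDEN_IMPORTS = {"domain": ("presentation",)}


def imported_modules(source: str) -> set[str]:
    """Collect the absolute module names imported by a piece of Python source."""
    modules = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            modules.update(alias.name for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            modules.add(node.module)  # relative imports are ignored in this sketch
    return modules


def is_within(module: str, package: str) -> bool:
    """True if `module` is `package` itself or one of its submodules."""
    return module == package or module.startswith(package + ".")


def rule_violations(module_name: str, source: str) -> list[str]:
    """List every forbidden import found in the given module's source."""
    violations = []
    for package, forbidden in FORBIDDEN_IMPORTS.items():
        if not is_within(module_name, package):
            continue
        for imported in sorted(imported_modules(source)):
            if any(is_within(imported, target) for target in forbidden):
                violations.append(f"{module_name} must not import {imported}")
    return violations


sample = "from presentation.views import render\nimport domain.models\n"
print(rule_violations("domain.orders", sample))
# prints ['domain.orders must not import presentation.views']
```

A gate built on this would fail, or warn, whenever the returned list isn’t empty.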

Close the Door on Easy-to-Game Metrics …

The current zeitgeist in the software industry is that you have to go fast. The sooner—and more often—you deliver value to the customer, the better. While doing that, however, you’ve got to keep quality high. You must take measures to check the quality of your software output to prevent the shipping of code that isn’t up to standards.

At first sight, it might look like those two goals are contradictory, but they’re not. Through the combined forces of methodologies, processes, and tools, the modern software development industry has achieved the remarkable feat of allowing teams to go fast while not breaking things.

In this post, we’ve covered one of the pieces in the puzzle that makes this possible: quality gates. You’ve learned the definitions of quality gates, why you would want to use them, how they work, and even some real examples.

Finally, it’s important to bear in mind that engineering metrics, quality gates included, can backfire. Metrics can be gamed, especially when they’re considered out of context and used as a target. So how do you ensure that doesn’t happen with your dev team?

… And Open the Gates to Software Quality

Our advice is to aim for the big picture. Don’t consider metrics in isolation.

Instead, consider adopting tools that can correlate metrics from various sources, such as your repository and your project management software. That way, you can have a more general view of the health of your project and dev team. You might be able to detect and mitigate project risk. And you’ll definitely learn in the process.

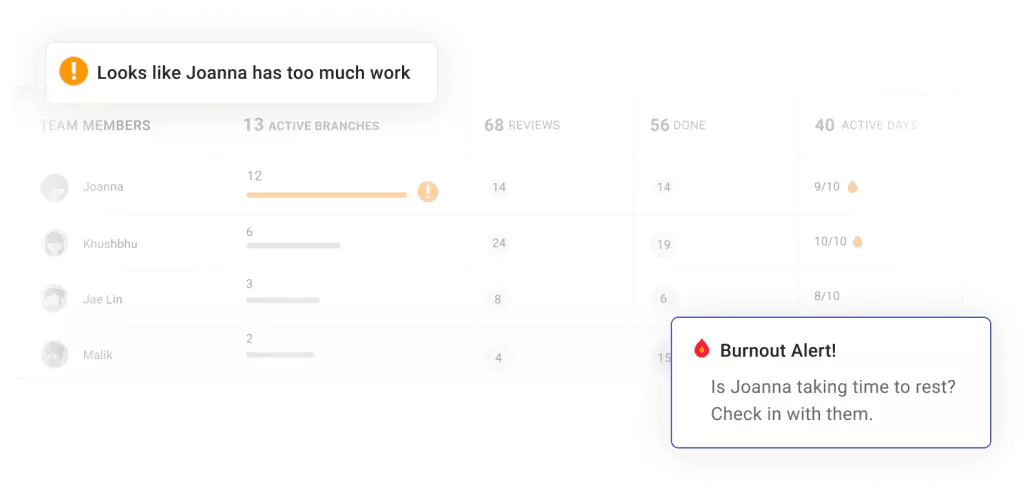

For instance, LinearB is a tool that can collect and correlate metrics from both your repositories and project management software, such as Jira. As a result, you get a very accurate view of the current state of your teams.

In the image above, taken from LinearB’s dashboard, you can see that the team member Joanna has too many active tasks and has worked 9 out of the last 10 days. Upon learning that, the team lead could decide to split the work more evenly between the other team members. Though such a metric might not fit the definition of code quality, it’s certainly a valuable measurement a team lead can use to improve the quality management and health of their applications.

The picture above is also from LinearB’s dashboard. It shows an important metric, rework (also known as code churn), across iterations. Such a report is a great example of LinearB’s capabilities: it brings repository and project management metrics together. As a result, it provides the user with a high-level view that can act as a quality gate.

We invite you to book a demo of LinearB. Thanks for reading.