Build vs. buy

Summary

- AI-assisted coding makes the dashboard trivial; the remaining challenge is the unified data set underneath, which is what breaks at scale.

- Six systems fail by months 2–6: identity resolution, team structure, APIs, data aggregation, edge-case metrics, and AI code correlation.

- DIY dashboards lack four things: benchmarks, workflow automation, AI code governance, and sustainability after the owner leaves.

- Evaluate platforms by operating model, not metrics. The key is action: most DIY tools measure but rely on busy humans to act.

DIY engineering productivity dashboards fail within three to six months for the same reason every time. The dashboard was never the hard part. Identity resolution, team-structure drift, API maintenance across five or six developer tools, edge-case-aware computed metrics, and AI code attribution at the pull request level are the work. A unified, pre-aggregated data set is what makes any metric defensible across 18 months of history and an organization that keeps reorganizing. You can stand up a GitHub merge-frequency chart in a weekend. Producing a cycle-time number your CTO will present to the board requires a metrics warehouse, continuous reconciliation, and an operating model that closes the loop from insight to action.

This page lays out why homegrown engineering metrics tools break at scale, the 10 capabilities to evaluate in a production platform, and the 18-month durability question that should drive the build vs. buy decision as AI-generated code becomes an increasing share of your codebase. Data points throughout are drawn from the LinearB 2026 Software Engineering Benchmarks Report, built from 8.1+ million pull requests across 4,800 engineering teams in 42 countries. It is written for engineering VPs and CTOs who have already tried the weekend build or are about to.

The quick-build solves a fraction of the problem

AI-assisted development has made it easy to stand up a basic metrics layer in days, but the visible dashboard represents only a small part of what a mature productivity platform delivers. Point an AI coding assistant at your GitHub API, write a few prompts, compute averages, and render charts. Within a week, you have visibility into merge frequency, open pull request (PR) counts, and rough throughput. The initial success creates a false sense of completion.

The easy questions are the only questions a weekend build answers: how many PRs merged this week, how long PRs stay open on average, and a rough code-churn rate. These are useful starting points. They are not a measurement program.

AI has made the dashboard itself trivial. What remains, and what AI cannot shortcut, is the data set underneath. A dashboard renders whatever you feed it. The hard work is producing a unified, resolved, aggregated representation of how your organization delivers software. The data set is the work, and that reframe is the through-line for everything that breaks in month two.

What to do next: list every question leadership has asked about delivery in the last 90 days. Count the ones your current dashboard answers without a human cross-checking a spreadsheet. The gap is your real scope.

Download your free copy of the guide

Six things that break early on

Before long, six separate systems degrade and convert your weekend win into an ongoing maintenance burden. Each failure is independent. Each one erodes trust in the numbers. By the time three of them hit in the same quarter, leadership stops believing the dashboard, and the internal tool enters the quiet decline that ends in abandonment.

1. Identity resolution

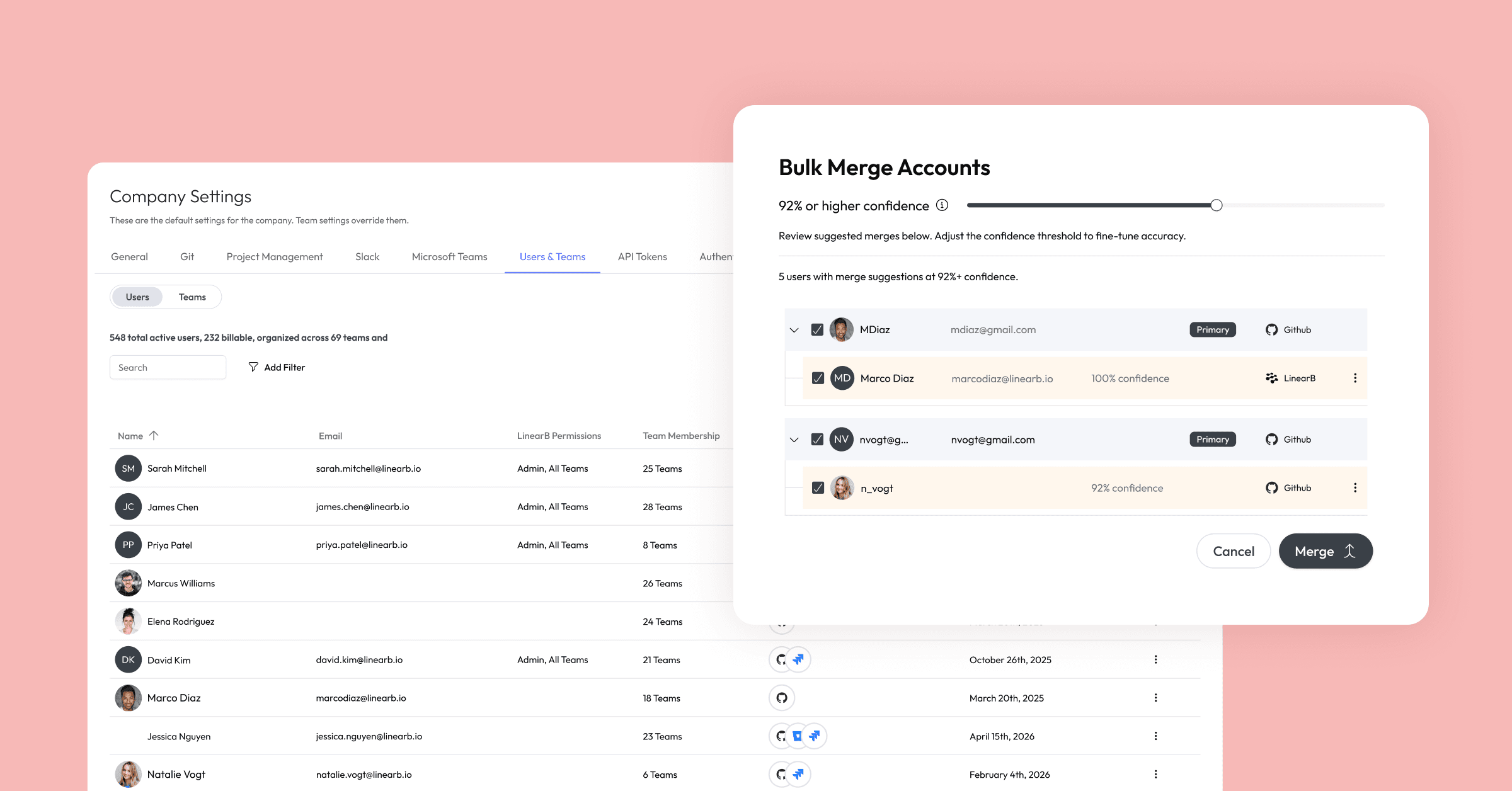

Your developers exist as different identities across every tool in your stack. The same person is john.smith@company.com in GitHub, j.smith@company.com in Jira, and jsmith in GitLab. A weekend script hardcodes a mapping table. That table breaks every time someone joins, leaves, changes their email, or gets absorbed through an acquisition.

Solving identity at scale requires continuous automated reconciliation across your source control, project management, CI/CD, and human resources (HR) systems, not a CSV someone updates on Fridays.
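
To make the reconciliation problem concrete, here is a minimal Python sketch that maps tool-specific handles to one canonical employee ID. The directory contents, the `normalize` rule, and every function name are illustrative assumptions rather than any vendor's method. A real pipeline would also pull from HR data and track renames over time.

```python
def normalize(handle):
    """Lowercase a handle and drop any email domain ('J.Smith@company.com' -> 'j.smith')."""
    return handle.strip().lower().split("@")[0]


def build_alias_index(directory):
    """Map each normalized alias to an employee ID.

    Aliases claimed by two different people are removed and returned
    separately so a human can review them instead of guessing.
    """
    index, ambiguous = {}, set()
    for employee_id, aliases in directory.items():
        for alias in aliases:
            key = normalize(alias)
            if key in index and index[key] != employee_id:
                ambiguous.add(key)
            index[key] = employee_id
    for key in ambiguous:
        del index[key]
    return index, ambiguous


def resolve(index, handle):
    """Return the canonical employee ID, or None when the handle is unknown or ambiguous."""
    return index.get(normalize(handle))


# Hypothetical directory: one person, three identities across tools.
directory = {"E100": ["john.smith@company.com", "j.smith@company.com", "jsmith"]}
index, ambiguous = build_alias_index(directory)
```

Returning `None` for unknown or ambiguous handles is a deliberate choice in this sketch: an unresolved identity is surfaced for review rather than silently attributed to the wrong person.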

2. Team structure drift

Org charts change constantly. People move between teams mid-sprint. A static team configuration goes stale within weeks, and every team-level aggregation starts producing misleading results. Maintaining accurate team structures requires integration with HR and project management systems, plus logic for the edge cases your real-world structure produces: dotted-line reports, temporary working groups, contractors, and tiger teams.
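
One common way to keep team-level aggregations honest through reorganizations is to store memberships with effective dates and look up the team as of the event date. The sketch below assumes a hypothetical `MEMBERSHIPS` table and `team_on` helper; it does not cover dotted-line reports or people on several teams at once.

```python
from datetime import date

# Hypothetical effective-dated rows: (employee_id, team, start, end).
# `end` is exclusive; None means the membership is still current.
MEMBERSHIPS = [
    ("E100", "payments", date(2025, 1, 6), date(2025, 3, 14)),
    ("E100", "platform", date(2025, 3, 14), None),
]


def team_on(memberships, employee_id, day):
    """Return the team an employee belonged to on a given day, or None if unknown."""
    for emp, team, start, end in memberships:
        if emp == employee_id and start <= day and (end is None or day < end):
            return team
    return None
```

With this shape, a PR merged on 1 February is attributed to `payments` even if the author has since moved to `platform`, so historical numbers do not shift when the org chart does.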

3. API maintenance across five or six tools

GitHub, GitLab, Bitbucket, Jira, and Azure DevOps all have different APIs, rate limits, authentication models, pagination schemes, and breaking-change policies. Keeping five or six integrations healthy is a part-time engineering job. Every API version bump, every auth token expiration, every new tool your organization adopts becomes your team's problem.

4. Data aggregation at the metrics-warehouse level

Once data flows from your integrations, you end up with raw events scattered across tools, time periods, and formats. Turning that into metrics a team can use requires pre-computing aggregations at scale, unifying schemas so a PR merged event means the same thing across providers, and building the slice-and-dice layer that lets you query by team, project, repo, or time range.

A cycle time number for a single team over a single sprint is straightforward. A cycle time metric you can pivot by team, cohort, repo, and business unit, consistently, over 18 months of history, is a data platform. At that point you are building a metrics warehouse, and the effort scales with every new tool, every new team, and every new question leadership asks.
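
As a rough illustration of schema unification, the Python sketch below translates two provider payloads into one shared "PR merged" event and pre-computes a weekly count. The payloads are trimmed toy examples and the field names are simplified assumptions; check each provider's API reference before relying on them.

```python
from collections import Counter
from datetime import datetime


def normalize_merge(provider, payload):
    """Translate a provider-specific merge payload into one shared event shape."""
    if provider == "github":
        pr_id, author = payload["number"], payload["user"]["login"]
    elif provider == "gitlab":
        pr_id, author = payload["iid"], payload["author"]["username"]
    else:
        raise ValueError(f"unsupported provider: {provider}")
    return {
        "provider": provider,
        "pr_id": pr_id,
        "author": author,
        "merged_at": datetime.fromisoformat(payload["merged_at"]),
    }


def merges_per_week(events):
    """Count merged PRs per (ISO year, ISO week)."""
    return Counter(tuple(e["merged_at"].isocalendar()[:2]) for e in events)


# Toy payloads, trimmed to the fields used here.
events = [
    normalize_merge("github", {"number": 12, "user": {"login": "jsmith"},
                               "merged_at": "2025-03-10T12:00:00+00:00"}),
    normalize_merge("gitlab", {"iid": 7, "author": {"username": "j.smith"},
                               "merged_at": "2025-03-12T08:30:00+00:00"}),
]
weekly = merges_per_week(events)
```

Every new provider adds another branch to `normalize_merge`, and every new pivot (team, repo, business unit) adds another aggregation to maintain, which is where the effort starts to resemble a warehouse.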

5. Edge-case-aware computed metrics

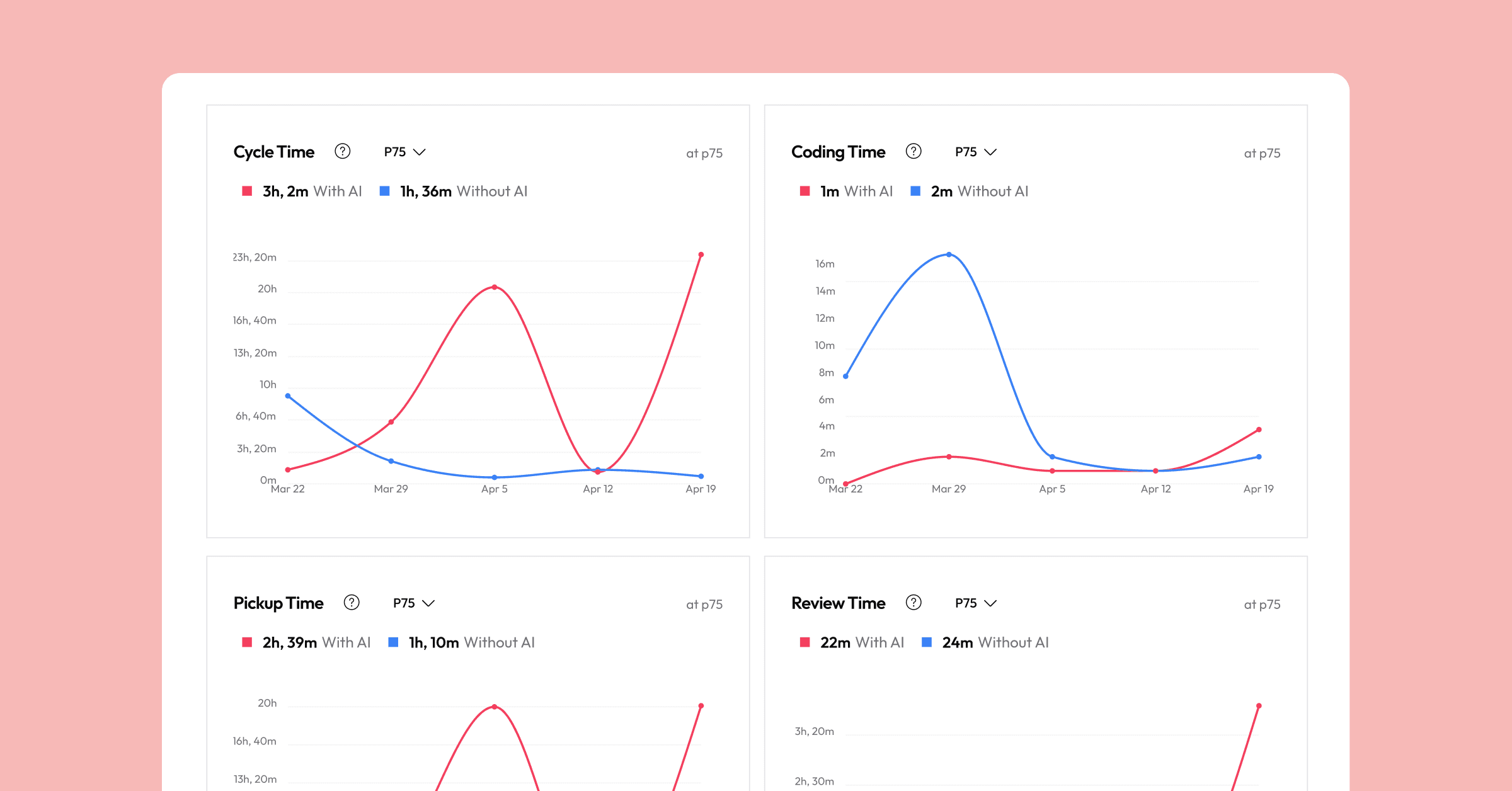

Cycle time seems straightforward until you calculate it accurately. A naive PR-opened-to-PR-merged timestamp misses draft periods, rework cycles, weekends and holidays, blocked states, and the distinction between active work time and idle time. The gap between rough approximation and reliable metric is enormous, and it compounds when you aggregate across teams or benchmark against external data.
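
The gap is easy to see in a small Python sketch that compares the naive opened-to-merged number with one that starts the clock when the PR leaves draft and skips weekends. The function names and the timestamps are hypothetical, the datetimes are assumed to be timezone-naive, and holidays, blocked states, and rework cycles are left out for brevity.

```python
from datetime import datetime, timedelta


def weekday_hours(start, end):
    """Hours elapsed between start and end, skipping Saturdays and Sundays."""
    total = timedelta()
    cursor = start
    while cursor < end:
        next_midnight = datetime.combine(
            cursor.date() + timedelta(days=1), datetime.min.time()
        )
        segment_end = min(next_midnight, end)
        if cursor.weekday() < 5:  # Monday=0 ... Friday=4
            total += segment_end - cursor
        cursor = segment_end
    return total.total_seconds() / 3600


def cycle_time_hours(opened_at, ready_at, merged_at):
    """Return (naive, adjusted) cycle time in hours.

    naive: opened -> merged wall-clock time.
    adjusted: ready-for-review -> merged, weekends excluded.
    """
    naive = (merged_at - opened_at).total_seconds() / 3600
    adjusted = weekday_hours(ready_at or opened_at, merged_at)
    return naive, adjusted


# Hypothetical PR: opened as a draft on Thursday, marked ready on Friday, merged on Monday.
naive, adjusted = cycle_time_hours(
    opened_at=datetime(2025, 3, 6, 9, 0),
    ready_at=datetime(2025, 3, 7, 9, 0),
    merged_at=datetime(2025, 3, 10, 9, 0),
)
```

For this one PR the naive figure is 96 hours and the adjusted figure is 24 hours, a fourfold difference before any of the harder edge cases are handled.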

6. AI code correlation at the PR level

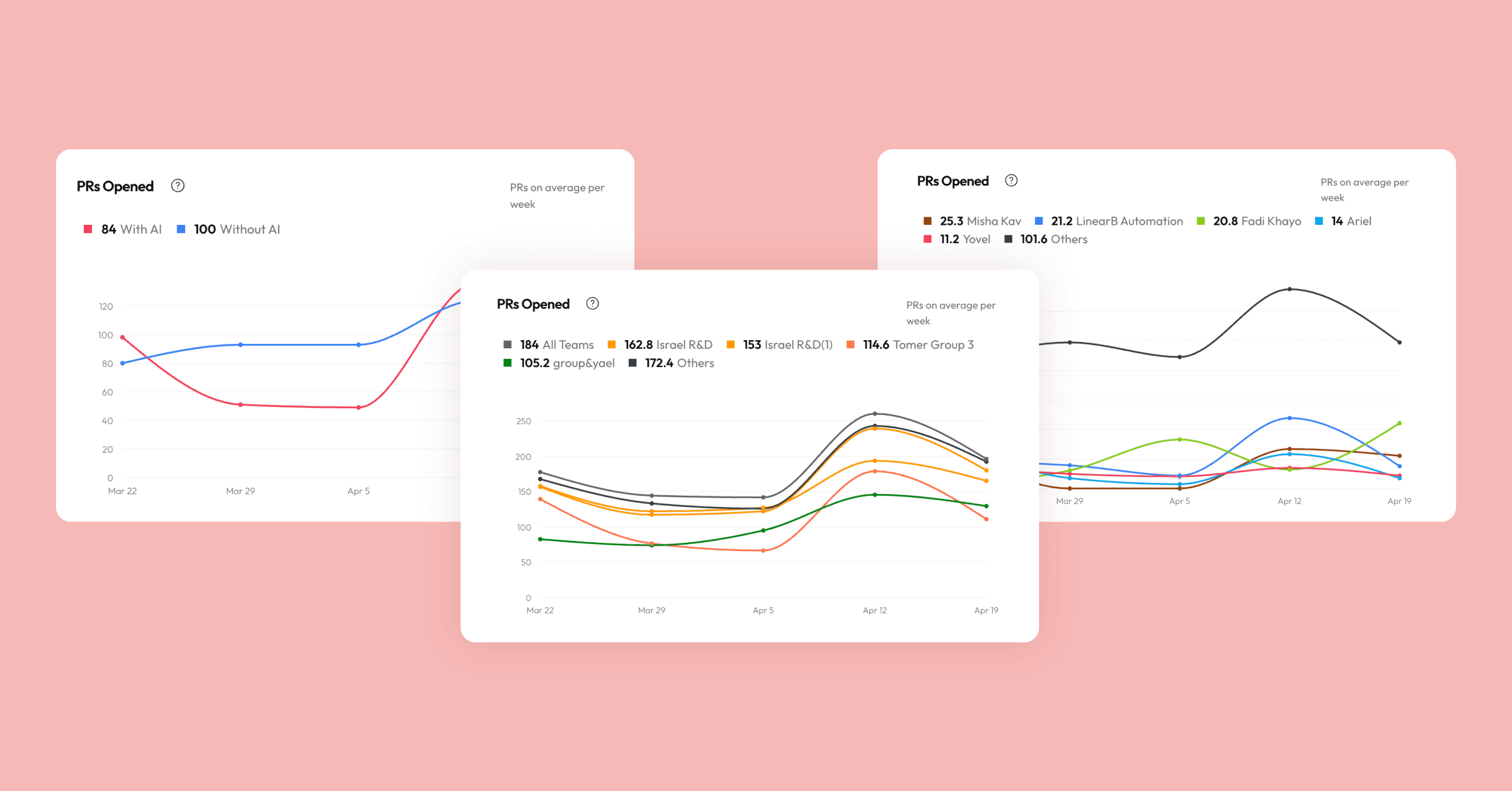

Knowing a developer accepted 200 GitHub Copilot suggestions yesterday tells you nothing about whether that code was committed, merged, or improved delivery outcomes. Even flagging whether a PR contains AI-generated code is harder than it looks. You cannot reliably identify AI activity on a pull request without pulling it directly from the originating tool's API, and most engineering teams now work across multiple AI coding assistants.

LinearB's 2026 Software Engineering Benchmarks Report, drawing on 8.1+ million pull requests across 4,800 engineering teams and 163,820 active contributors, quantifies the gap. At the 75th percentile, AI-assisted PRs run 2.6x larger than unassisted ones (408 vs. 157 lines of code), and Agentic AI PRs sit idle 5.3x longer before a reviewer picks them up (1,055 vs. 201 minutes). Seat-adoption counts will never surface that. PR-level correlation will.

Correlating AI activity to delivery outcomes requires fusing data at the commit level across multiple systems. Most homegrown tools never attempt this because the data model is too complex to build ad hoc. For a tool-by-tool breakdown of what this looks like in practice, see LinearB's analysis on measuring the impact of Copilot and Cursor on engineering productivity.
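
A simplified Python sketch of commit-level fusion is shown here: a PR is flagged as AI-assisted when any of its commits appears in the AI tools' activity data, and a delivery metric is then compared across the two groups. The record shapes, the `ai_commit_shas` set, and the numbers are toy assumptions, not figures from the benchmarks report, and real attribution would need data from each assistant's own API.

```python
from statistics import median


def flag_ai_prs(prs, ai_commit_shas):
    """Mark a PR as AI-assisted when any of its commits appears in the AI activity data."""
    return [
        {**pr, "ai_assisted": any(sha in ai_commit_shas for sha in pr["commits"])}
        for pr in prs
    ]


def median_by_flag(prs, metric):
    """Median of one metric, split by the ai_assisted flag."""
    groups = {}
    for pr in prs:
        groups.setdefault(pr["ai_assisted"], []).append(pr[metric])
    return {flag: median(values) for flag, values in groups.items()}


# Toy data only.
prs = [
    {"id": 1, "commits": ["a1", "a2"], "pickup_minutes": 300},
    {"id": 2, "commits": ["b1"], "pickup_minutes": 120},
    {"id": 3, "commits": ["c1"], "pickup_minutes": 500},
    {"id": 4, "commits": ["d1"], "pickup_minutes": 80},
]
ai_commit_shas = {"a2", "c1"}  # hypothetical: gathered from each assistant's API
flagged = flag_ai_prs(prs, ai_commit_shas)
pickup = median_by_flag(flagged, "pickup_minutes")
```

The join itself is short. The difficulty the section describes sits upstream, in obtaining a trustworthy `ai_commit_shas` set across several assistants and keeping it aligned with commits that get squashed or rebased.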

What to do next: audit which of these six systems your internal tool handles today with automation, not human intervention. Anything relying on a weekly manual reconciliation is on the path to decay.

Four capabilities a DIY dashboard never reaches

Even with perfectly accurate data, a homegrown dashboard hits a hard ceiling because it remains passive. Leadership needs interventions and behavior change, not reporting. Without actionability, insights die in the gap between observation and action, and four capabilities specifically are the ones a DIY build never reaches.

1. External benchmarks

Accurate metrics without benchmarks leave you staring at numbers, unsure what to do next. You can see cycle time went up, but you cannot tell whether that is a crisis or a rounding error, whether your team is behind or ahead of peer teams, or where the biggest improvements lie.

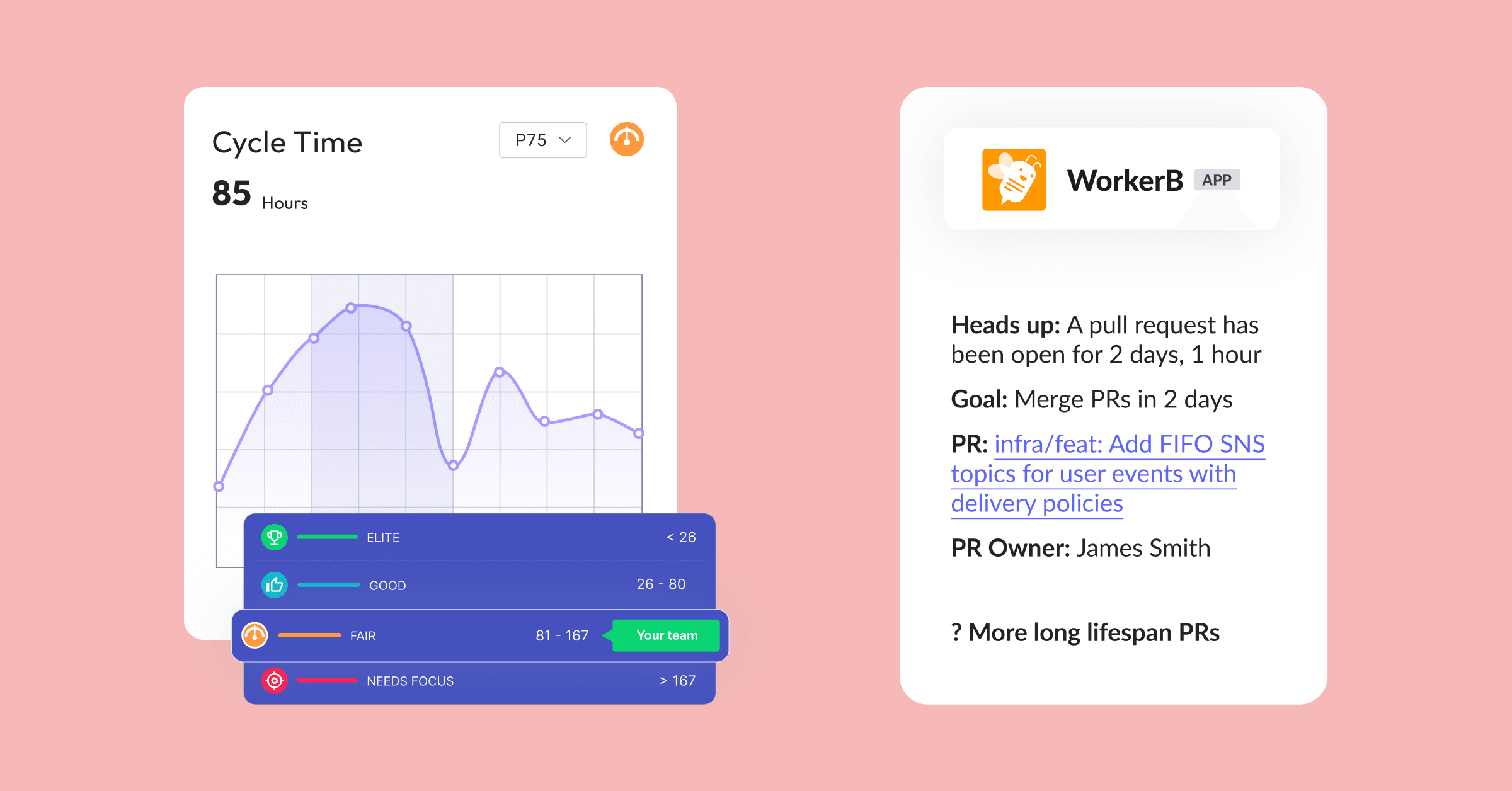

Benchmarks convert metrics into decisions. They tell you what good looks like and where to focus first, the prerequisite for any credible action plan. Building a benchmark dataset requires normalized data across thousands of engineering teams, consistent metric definitions that hold up across engineering cultures, and ongoing curation as the industry evolves. No internal build can replicate that.

2. Workflow automation and policy-as-code

A homegrown dashboard stops at the point of insight. You built a dashboard. Someone now has to look at it, interpret it, decide what to do, and manually follow through. There is no automated PR routing, no AI code review triggered by risk level, no policy-as-code enforcement, and no workflow automation. Every insight requires a human to act on it.

3. Independent AI code review and governance

As AI generates more of your code, review and quality standards need to keep pace. Homegrown dashboards lack a mechanism for independent AI code review, a way to ensure AI-generated code meets security and compliance standards before it merges, and a governance layer to manage AI's downstream impact on your delivery pipeline.

4. Sustainability after the owner leaves

Someone has to own the internal tool. When that person gets pulled onto a product priority, takes a new role, or leaves the company, the homegrown solution starts to rot. The pattern is consistent: the internal tool works for three to six months, falls behind on API changes, accumulates data quality issues, and gets abandoned. Production platforms are maintained by dedicated vendor teams, which is how they deliver value 18 months in.

What to do next: write down the last three metrics-driven decisions your leadership made. Trace each one from observation to intervention. If a human made every hand-off, your current tool is a reporting layer, not a platform.

What to look for in a production engineering productivity platform

Evaluate platforms on operating model and business outcomes, not metric coverage or feature lists. The question is no longer which tool has the cleanest DORA (DevOps Research and Assessment) dashboard. The real question is which platform enables you to prove whether AI is increasing your engineering throughput without sacrificing delivery confidence, flow efficiency, or developer experience.

Ten capabilities to score every platform against

- Unified data foundation: Identity resolution, team-structure maintenance, and pre-computed aggregation across every tool in your stack. The prerequisite for every downstream metric.

- Tool-agnostic connectivity: Integrations with Git providers, project management, CI/CD, and every AI coding assistant your teams use, without lock-in to a single vendor ecosystem.

- Edge-case-aware computed metrics: Cycle time, change failure rate (CFR), rework, and predictability metrics that correctly handle draft periods, blocked states, weekends, and the active-vs-idle-time distinction.

- PR-level AI correlation: The ability to flag AI involvement on individual pull requests across GitHub Copilot, Cursor, Claude, and any other tool in play, with that activity connected to downstream delivery outcomes.

- External benchmarks: Industry reference points that convert internal metrics into decisions by showing what good looks like and where to focus first.

- Workflow automation and policy-as-code: PR routing, reviewer assignment, and enforceable gating at the point of merge.

- Independent AI code review and governance: Review and policy enforcement for AI-generated code, separate from the tool that produced it.

- Exploration and AI-native analysis: Natural language query, flexible dashboards, and an API that AI agents and internal tools can query directly.

- Multi-persona utility: Value for developers, managers, platform teams, and executives in one deployment.

- Enterprise readiness: Security certifications and controls to deploy at scale, including SOC 2, SSO, role-based access, and data residency options.

What to do next: turn the 10 capabilities into a one-page scorecard and run any platform you are evaluating against it before a demo. Filled versus empty dots will tell you more than a feature list.
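
If you prefer a spreadsheet-free version of that scorecard, a small Python sketch along these lines would do. The 0/1/2 rating scale, the optional weights, and the `score_platform` name are assumptions made for illustration; the capability names are the ten listed above.

```python
CAPABILITIES = [
    "Unified data foundation",
    "Tool-agnostic connectivity",
    "Edge-case-aware computed metrics",
    "PR-level AI correlation",
    "External benchmarks",
    "Workflow automation and policy-as-code",
    "Independent AI code review and governance",
    "Exploration and AI-native analysis",
    "Multi-persona utility",
    "Enterprise readiness",
]


def score_platform(ratings, weights=None):
    """Return the weighted share (0.0 to 1.0) of capabilities a platform covers.

    ratings: capability -> 0 (missing), 1 (partial), or 2 (full).
    weights: optional capability -> weight; unlisted capabilities weigh 1.
    """
    weights = weights or {}
    missing = [c for c in CAPABILITIES if c not in ratings]
    if missing:
        raise ValueError(f"unrated capabilities: {missing}")
    earned = sum(ratings[c] * weights.get(c, 1) for c in CAPABILITIES)
    possible = sum(2 * weights.get(c, 1) for c in CAPABILITIES)
    return earned / possible


# Hypothetical platform: full marks everywhere except workflow automation.
ratings = {c: 2 for c in CAPABILITIES}
ratings["Workflow automation and policy-as-code"] = 0
unweighted = score_platform(ratings)
action_weighted = score_platform(ratings, {"Workflow automation and policy-as-code": 3})
```

Requiring a rating for every capability is intentional: a blank cell is treated as an error rather than a silent zero, so gaps in the evaluation stay visible.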

Four outcome areas every evaluation should cover

Before comparing features between your DIY solution and a production platform, decide what outcomes you are trying to drive. These four outcome areas, as outlined in the APEX Framework, cover every credible reporting use case for engineering productivity.

AI leverage: whether your AI investments translate into delivery improvement, including adoption quality and downstream impact.

Predictability: how reliably teams deliver what they committed to, including project health and forecast accuracy.

Efficiency: how work flows through your system, including speed, throughput, and cycle time.

Developer experience: how your engineers feel about their work, and whether their experience contributes positively to productivity.

The operating model: explore, measure, act

The success of an engineering productivity platform rests on its ability to help you explore, measure, and act on what you discover, with action as the differentiator. Most DIY tools stop at measurement and leave every intervention to a human with a full schedule. That is the gap where most improvement initiatives die.

Explore

Answer new questions dynamically without pre-building every dashboard. You need flexibility to pivot as questions emerge, integration breadth so context lives in one place, and natural-language interfaces for AI-assisted analysis. Exploration surfaces the question worth asking.

Measure

Get accurate, benchmarked, edge-case-aware metrics across all four outcome areas. Computed metrics must account for draft periods, rework cycles, weekends, blocked states, and the distinction between active and idle time. External benchmarks give internal numbers context. Measurement tells you where to act and how urgently.

Act

Drive actual improvement with workflow automation and policy enforcement: PR routing, reviewer assignment, merge gating, AI code review, and automated alerts when teams are at risk. Action is the point of the entire exercise.

Action matters more than exploration or measurement because engineering teams do not lack dashboards. They lack effective interventions. A platform can surface the most accurate cycle time metric in the industry, benchmark it, and break it down by team, repo, and AI tool attribution. None of that matters if the platform cannot also route PRs intelligently, enforce review policies at merge time, or alert teams when a release is at risk.

Most DIY tools stop at measurement. They produce reports and leave the operational work to humans who already have full schedules. The familiar pattern: leaders get the data, form an opinion, hold a meeting, assign a follow-up, and hope the behavior change sticks. It rarely does. Every manual step between insight and intervention is a step where the improvement can die.

What to do next: weight action capabilities disproportionately when scoring platforms. Exploration and measurement are table stakes.

AI leverage is a first-class platform layer, not a data source

Platforms that treat AI as just another data source will underperform platforms that treat AI as a first-class part of the software development lifecycle (SDLC) requiring measurement, review, and governance. AI coding tools are now mainstream across professional developers. GitHub's Octoverse report tracks the industry-wide rise in AI-assisted development and the explosion of generative-AI projects. As AI generates an increasing share of your code, the platform question moves from tracking adoption to shipping AI-assisted work safely at merge time.

Three capabilities to demand of any platform

- Real delivery impact beyond seat adoption. Knowing a team accepted 200 AI suggestions tells you little. Knowing which AI-assisted PRs merged, and whether those shipped faster than the baseline, is the real signal. See the AI measurement framework for engineering teams for how to structure that analysis end to end.

- Independent review of AI-generated code. A Claude or Cursor contribution that passes its own checks needs a second layer of review before merge, especially as AI-generated code becomes a larger share of your codebase.

- Governance of AI output at the point of merge. Governance means enforceable standards on AI-generated code, not advisory dashboards leaders can choose to ignore.

The governance case, in numbers: LinearB's 2026 Software Engineering Benchmarks Report introduced a new benchmark, Acceptance Rate, the percentage of PRs merged within 30 days, specifically because AI-generated code performs in a different range from manual code. Elite teams hit a 95% acceptance rate on manual PRs but only 71% on Agentic AI PRs, and most teams cannot exceed 60% on AI PRs. Heavier Agentic AI adoption is not translating into greater value delivery. That gap is what an AI-aware platform is built to close at merge time, and it is exactly the gap a DIY dashboard leaves open.
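
Taking the definition above at face value, Acceptance Rate can be sketched in a few lines of Python. This version assumes the denominator is every PR opened in the period and that the 30-day window starts when the PR is opened; the report's exact methodology may differ, and the sample PRs are invented for illustration.

```python
from datetime import datetime, timedelta


def acceptance_rate(prs, window_days=30):
    """Percentage of PRs merged within window_days of being opened.

    Returns None for an empty input rather than dividing by zero.
    """
    if not prs:
        return None
    window = timedelta(days=window_days)
    accepted = sum(
        1
        for pr in prs
        if pr["merged_at"] is not None
        and pr["merged_at"] - pr["opened_at"] <= window
    )
    return 100 * accepted / len(prs)


# Invented sample: two quick merges, one late merge, one never merged.
sample = [
    {"opened_at": datetime(2025, 1, 1), "merged_at": datetime(2025, 1, 3)},
    {"opened_at": datetime(2025, 1, 1), "merged_at": datetime(2025, 1, 20)},
    {"opened_at": datetime(2025, 1, 1), "merged_at": datetime(2025, 3, 1)},
    {"opened_at": datetime(2025, 1, 1), "merged_at": None},
]
rate = acceptance_rate(sample)
```

Running the same function separately over manual and AI-generated PRs is what turns it into the comparison described above, which again depends on PR-level AI attribution being available.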

The 18-month durability question

The right decision frame for a multi-year platform choice is not today's dashboard. The right frame is which platform will still deliver value 18 months from now. By then, your AI strategy will have matured, your organization will have significantly more AI-generated code flowing through it, and leadership's questions will have moved beyond velocity into governance, quality, and business impact.

A platform that can explore, measure, and act across all four outcome areas today will continue to earn its place as those questions evolve. Platforms that deliver less will reveal their limits the moment your AI strategy enters its next phase.

A concrete example of what 18 months looks like: the LinearB 2026 Software Engineering Benchmarks Report introduced Acceptance Rate as a brand-new benchmark category this year. It did not exist in prior editions because AI-generated PRs did not need their own benchmark range. Your platform needs to track benchmark categories that have not been defined yet. By month 18, the measurement frame will shift again.

What to do next: map your expected AI code volume in 18 months, then ask whether your current tool could govern it at merge time. If the answer is no, the evaluation is simpler than it looks.

Frequently asked questions

Should we build or buy engineering productivity metrics?

Build the weekend version to understand the problem; buy the platform that carries you past month six. DIY dashboards answer 5% of the questions leadership asks in the first 90 days and then degrade as identity resolution, team structure, API integrations, data aggregation, computed metrics, and AI correlation each break in turn, and as the tool's owner moves on. A production platform delivers benchmarks, workflow automation, AI code review, and enforceable governance that no internal build reaches.

Why do homegrown engineering dashboards fail at scale?

Homegrown dashboards fail because the dashboard was never the hard part. The unified data set underneath is. Identity mismatches, org-chart drift, breaking API changes across five or six tools, naive cycle-time math, and missing AI attribution converge within three to six months, and the internal tool gets abandoned when the engineer who owned it moves on.

What metrics should an engineering productivity platform cover?

An engineering productivity platform should cover four outcome areas: AI leverage (whether AI investments translate into delivery improvement), predictability (project health and forecast accuracy), efficiency (speed, throughput, cycle time), and developer experience (how engineers feel about their work). Every credible reporting use case fits inside these four outcomes.

How do I measure AI's impact on engineering delivery?

Measure AI's impact on engineering delivery at the pull request level, not by seat adoption. Flag AI involvement on each PR across every coding assistant your team uses, then correlate those PRs with downstream delivery outcomes: merge rate, cycle time, rework, and change failure rate. Seat counts tell you who logged in. PR-level attribution tells you whether AI made your delivery better.

What is policy-as-code for engineering productivity?

Policy-as-code for engineering productivity is enforceable gating at the point of merge, including automated review routing, required reviewers by risk level, AI code review triggers, and merge blocks for PRs that do not meet your defined standards. It is the mechanism that converts a metric into a behavior change without requiring a human to enforce it manually.
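
As a hedged illustration, a merge gate expressed as code might look like the Python sketch below. The `POLICY` structure, the risk labels, and the PR fields are hypothetical and do not reflect any specific product's configuration format.

```python
# Hypothetical policy: reviewer counts by risk level, plus an AI review requirement.
POLICY = {
    "reviewers_by_risk": {"low": 1, "medium": 1, "high": 2},
    "require_independent_ai_review": True,
}


def evaluate_merge(pr, policy=POLICY):
    """Decide whether a PR may merge and list every unmet requirement."""
    reasons = []
    required = policy["reviewers_by_risk"][pr["risk"]]
    if pr["approvals"] < required:
        reasons.append(f"needs {required} approvals, has {pr['approvals']}")
    if (
        policy["require_independent_ai_review"]
        and pr["ai_assisted"]
        and not pr["ai_review_passed"]
    ):
        reasons.append("AI-assisted PR has not passed independent review")
    return {"allow_merge": not reasons, "reasons": reasons}


# Hypothetical high-risk, AI-assisted PR with a single approval.
decision = evaluate_merge(
    {"risk": "high", "approvals": 1, "ai_assisted": True, "ai_review_passed": False}
)
```

Because the rules live in data rather than in someone's head, the same check runs on every PR, and changing a standard means changing one value instead of re-briefing every reviewer.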

How long does a DIY engineering metrics dashboard last?

A DIY engineering metrics dashboard typically lasts three to six months before data quality issues, API drift, and owner attrition erode leadership trust in the numbers. After that point, your team either invests a second engineering sprint to rebuild it or migrates to a production platform.

The weekend build teaches you what matters; the production platform delivers it

If you have already built a homegrown dashboard, you are not starting from zero. You have the domain expertise to recognize what drives improvement, and that is exactly the advantage you need when evaluating platforms designed to solve the problems you have already hit.

The fundamental shift is from passive observation to active intervention. AI has moved the constraint downstream: code generation accelerates while review, testing, and governance struggle to keep pace. Most productivity gains disappear in the gap between AI writing more code and teams shipping more value. Closing that gap requires platforms that correlate AI adoption to delivery outcomes, enforce quality standards at merge time, and automate the workflow interventions that turn bottlenecks into throughput.

LinearB is an engineering productivity platform that enables engineering leaders to prove whether AI is increasing engineering throughput without sacrificing delivery confidence, flow efficiency, or a sustainable developer experience. Where homegrown dashboards answer surface-level questions and leave humans to interpret and act, LinearB connects AI tool activity to delivery impact, provides independent code review for AI-generated output, enforces governance policies at the point of merge, and automates PR routing and workflow decisions that eliminate manual bottlenecks. Whether code comes from developers, AI assistants, or autonomous agents, you get full visibility and control over how that code reaches production.

Written by Dan Lines, co-founder and COO at LinearB. Dan has spent his career scaling engineering organizations, from developer to VP of Engineering to founding LinearB.